
9/27/2022 Do’s and Don’ts Regarding How To Assess Scientific Studies in the Age of the Internet and Social Media

A positive aspect of the advent of the internet is that scientific studies can be made public as soon as they are ready to be published. However, these studies are highly technical publications intended for scientists to study and analyze. Thus, one negative effect of greater access to the scientific literature is that individuals without the education and technical knowledge necessary to evaluate the studies can now read them. As a result, these individuals may spread erroneous claims about these studies in their blogs, podcasts, social media, and other outlets, either because they misunderstood them or because they have an agenda that favors certain interpretations of the studies, even if those interpretations are not supported by the data. I have lost track of the number of times I have seen someone on Twitter make claims about some issue by citing the latest published scientific article. Invariably, the purpose of the individuals making these claims is not to discover or debate the truth, but rather to support their political or social agendas. I have tried to explain that truth in science is not established by one or even a few studies, even if they are published in peer-reviewed journals. Scientists have to debate the merits and flaws of each other’s studies, and this process takes time. During this process scientists may make claims that they later recant when more evidence becomes available, or a study that was heralded as a good study may fall into disfavor if it is realized that certain variables that turn out to be important were not controlled. But when scientists do these normal things that are part of the scientific process, they are accused of flip-flopping or selling out to special interests.
The above process is amplified by various types of media that reach millions of people and contribute to confusion and suspicion when people see narratives change. I saw this happen with hydroxychloroquine. A study would come out indicating that hydroxychloroquine (HCQ) had an effect against COVID-19, and all the HCQ proponents would brag that the issue was settled and HCQ worked. Then another study would come out showing that HCQ had no effect, and all the HCQ critics would claim HCQ did not work. In the middle of the storm, responsible scientists or organizations would comment on the studies, pointing out flaws or strengths, and they would be denounced by one side or the other. Eventually enough studies accumulated, and they showed not only that HCQ does not work against COVID-19, but also why it does not work. However, by then HCQ had lost its appeal as a political issue. I have seen this happen again with the drug ivermectin. A study came out of Brazil using a population of 88,012 subjects in which ivermectin use was associated with a 92% reduction in the COVID-19 mortality rate. The pro-ivermectin crowd declared victory, bragged about how they had been right all along, and claimed that withholding ivermectin had led to many preventable deaths from COVID-19. The truth, however, was very different. This was an observational study in which patients were not randomly allocated to treatments, which can lead to serious biases in the data. And while a sample size of 88,012 subjects sounds impressive, the actual comparisons were performed on much smaller subsets. For example, the 92% result came from comparing 283 ivermectin users to 283 non-users. Additional problems included the exclusion of a large number of subjects and the lack of control over ivermectin use.
Finally, an effect of such a large magnitude (92%) could not have gone undetected in better designed and controlled trials, yet such trials have not detected it. As I have pointed out before, the politicization of science creates a caustic environment where the work of scientists is mischaracterized or attacked by unscrupulous individuals, and this makes the process of science much harder than it already is. To avoid all the problems mentioned above, I have put together a list of do’s and don’ts regarding how to assess scientific studies in the age of the internet and social media.

1) Do listen to what scientists have to say about the studies. They are experts in their field, and an expert is called that for a reason: they have studied for many years and trained to do what they are doing. Do not assume you know more than the experts. Do not merely quote a study in your blog or social media to defend a position. Rather, do report on the debate among scientists regarding the strengths and weaknesses of the study and identify unanswered questions.
2) Do give scientists the time to evaluate and debate the studies and to reassess them as more information becomes available. Do not attack scientists for changing their minds.
3) To make up your mind, do wait for several studies to accumulate and for the majority of scientists to reach a consensus regarding the studies. However, this consensus will be reached based not on the total number of studies, but rather on their quality. One study of good quality can be more meaningful than dozens of low-quality studies, and the community of scientists (not a single scientist) is the ultimate arbiter of the quality of the studies.
4) Do not judge scientists or the results of their studies by their affiliations with companies or other organizations. The studies have to be judged on their merits. Do not make offhand claims that conflicts of interest have corrupted the science if you don’t have any evidence for it. Hearsay, innuendo, and ignorance are not proof of anything.
5) Success in science is measured by the ability of scientists to convince their peers. Scientists who have been unable to convince their peers and who bypass the normal scientific process to take their case to “the people” should raise a huge red flag. Do not blindly trust renegade scientists who claim they are ignored by their peers. These scientists are often ignored because their studies are deficient and their ideas are unconvincing.
6) Do not defend and promote a scientific claim just because a celebrity or politician whom you trust or like has endorsed it. Endorsement of a scientific claim by a non-scientist without any hard evidence is irrelevant to the scientific debate. Science is not politics.

If everyone follows these guidelines, we can hopefully restore a measure of rationality to the scientific discourse among the public. Image by Tumisu from Pixabay is free for commercial use and was modified.


10/31/2020 Employing the Timeline and Cross-Sectional Methods to Study the Change in the Color of Leaves During Autumn

Scientists often need to evaluate how a group of entities changes over time in response to some natural or man-made process. Such entities can be the animals or plants belonging to a species, or they can be non-living things such as the glaciers, rivers, or mountains in a given area. To do this, scientists could try to study all the entities they want to evaluate, but this is often too costly, impractical, or impossible. So to make this evaluation possible, scientists may use one of two methods. I will illustrate these methods by applying them to the study of the change in leaf color during the fall.

The Timeline Method

The first method is the timeline method. This method involves following some entities over time during the period of interest and documenting how they change. The idea is that the change of the overall population or group can then be extrapolated from the change of the particular entities that are followed. I used the timeline method to document how the leaves of a maple tree in my neighborhood changed during one week in the autumn. Maples belong to the genus Acer, but I don’t know the species of this particular tree. The color of most of the leaves of the tree had already begun to change, and many had already fallen. I selected several of the low-lying leaves that were still mostly green, wrapped their stems with tape, assigned them a number, and photographed them. Over the next six days I came back at roughly the same time of day to photograph the leaves I had selected. During those six days the tree lost most of its leaves, as can be seen below. I show below the change in leaf color of six leaves on the tree over the six-day observation period. Each column corresponds to one day. Some of the leaves fell between days 5 and 6, but I was able to retrieve them from the ground and photograph them.
You can see that the leaves change color at different rates and the transition is far from homogeneous. In some cases spots of intense red color appear and then spread, whereas in other cases the green over an area of the leaf fades and is replaced by a diffuse red. The green color comes, of course, from the pigment chlorophyll, which is degraded during the fall. In the particular case of maples, as chlorophyll is degraded, the leaves produce another pigment called anthocyanin, which is responsible for the red color and may play a protective role during leaf senescence. The timeline method will work best if the entities studied and the way they change are representative of the overall population. As the leaves I chose to study were the most accessible ones at the bottom of the tree, they may not be wholly representative of the changes in the leaves at the top of the tree. Other differences may be caused by variables such as disease, amount of available sunlight, etc. Ideally, I should have taken a larger sample of leaves from different parts of the tree at several heights and evaluated them over time.

The Cross-Sectional Method

As you saw above, at any given time there are a number of leaves at different stages of the color-change process. This fact allows the application of the cross-sectional method. To apply this method, I photographed several leaves on the first day (a cross section in time), and using these leaves I put together a possible sequence of leaf color change that describes the overall process, shown below from top left to bottom right. The cross-sectional method involves much less work than the timeline method and is intended to be an overall representation of the change of leaf color in the tree. However, care must be taken in selecting the individual leaves used to assemble the representative sequence.
There are some obvious differences between the sequence I put together using the cross-sectional method and the changes displayed by the individual leaves using the timeline method. In particular, the red color and its boundary in the cross-sectional sequence appear more vivid, sharper, and more circumscribed than in most of the individual leaves I followed. If I repeat this study, I will have to be more careful in my methodology and select a wider variety of leaves. The timeline and cross-sectional methods have many applications in science. A present-day application of the timeline method is the use of radio tracking (nowadays improved by satellite and GPS systems) and genetic monitoring to study the movements of wildlife populations and the way they change over time. But scientists can also use the timeline method to study living things in the past. Because living things changing through time leave a sequential record of their change in rocks (fossils), and to a certain extent in the stages of an embryo’s development, scientists can use fossils and embryology to piece together how organisms evolved over millions of years. The timeline method can also be applied to study how non-living things changed in the past. For example, scientists have measured the concentration of carbon dioxide (CO2) trapped in air bubbles inside the ice of the polar caps going back hundreds of thousands of years, obtaining important information about how it relates to atmospheric temperatures and global warming. Ideally, the timeline method is preferable to the cross-sectional method, but the cross-sectional method is sometimes the method of choice when time or resources for the study are limited, or in the case of processes that occur over very long intervals of time without leaving any records.
Studying the genes or proteins of living organisms and comparing them to each other to figure out their interrelationships is an application of the cross-sectional method. A remarkable application of the cross-sectional method is the study of galaxy collisions. These events take billions of years, so it’s impossible to follow them over time. To study galaxy collisions, astronomers photograph galaxies at different stages of collision (a cross section) and write computer programs to explain the different stages they observe in the process, as described in the video below. The timeline and cross-sectional methods allow scientists to peer back in time and uncover the changes that took place in the past and how they shaped the present, and to uncover which changes are taking place in the present and how they may shape the future. The photographs belong to the author and can only be used with permission.

I have read many social media posts during the COVID-19 pandemic by people who claim to be frustrated and confused with scientists. They say that first it was “masks are not necessary” and now it’s “wear masks”. First millions were projected to die, and then tens of thousands. A test for COVID-19 is put out, but then it doesn’t work. Articles are published showing hydroxychloroquine is dangerous and clinical trials are paused, but then the articles are retracted and the trials are continued. Hydroxychloroquine is authorized for use, and then it isn’t. These people claim that they are tired of flip-flops, dithering, and mistakes. Some even argue that these happenstances are not random, but represent a pattern that is part of a conspiracy by scientists aligned with pharmaceutical companies, the left, the World Health Organization, and the Gates Foundation to scare US citizens, impose on them, deprive them of cheap effective therapies, and take away their freedoms. The above reaction is not unexpected.
We know that belief in conspiracy theories greatly increases in times of social upheaval that generate uncertainty, stress, and anxiety. But apart from that, much of the exasperation and confusion some people feel toward scientists and the scientific process stems from unrealistic expectations about what science is, what scientists do, and how they do it, coupled with the realities of doing research in the current environment. So let me set the record straight. The history of science is awash in stories where scientists have made mistakes, flip-flopped on theories, and retracted articles in their pursuit of truth. Some of these mistakes occurred because of faulty data, technology, or procedures, and others involved scientists having wrong ideas. Normally scientists have time to sort out all these mistakes and methodological issues over the course of a few years (or in some cases decades), and they can come up with a reasonably robust approximation to the truth. This is a messy process with a lot of uncertainty, missteps, and blind alleys, but most of the time it happens away from the limelight. When most people come into contact with scientific discovery, what they see is the end product of this process, the tip of the iceberg if you will, and they are usually oblivious to all the blood, sweat, and tears it took to get there.

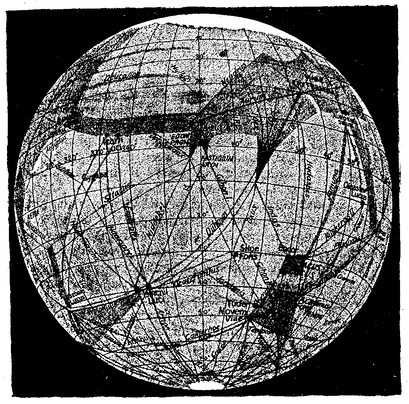

So, take this scientific process with all of its imperfections, mistakes, and uncertainties, and put it in the middle of a pandemic where people are dying and society needs answers, tests, therapies, and guidelines, NOW! You have just rushed the scientific process tenfold, and you have also magnified the potential for screw-ups by the same amount. Add to this the fact that COVID-19 research, from vaccines to hydroxychloroquine, has been politicized, and that every relevant announcement is interpreted as being in favor of or against something and amplified by orders of magnitude by the news cycle and social media. Finally, factor in that the general public is being exposed on a daily basis to the process of science in this difficult environment, but often filtered through the sieves of partisan pundits who distort the science. What do you get? What you get is one sad, toxic mess. Very preliminary or questionable research is catapulted to the forefront of public attention and presented as the truth, whereas solid research is nitpicked to find flaws in order to dismiss it. Honest mistakes or changes of opinion by scientists are interpreted in the worst possible way to question the trustworthiness of the individuals involved. Many excellent scientists doing what they’ve always done, the way they’ve always done it, are now portrayed at best as Keystone Cops or at worst as sinister characters of questionable morals whose motives are divorced from the well-being of the public. No wonder some people are frustrated and confused with science and scientists! But my message to these people is the following: Don’t concentrate on the PROCESS of science. Focus on the PROGRESS of science. Progress? Yes, progress! Science and scientists have made an immense amount of progress during the COVID-19 pandemic.
Scientists have isolated and sequenced the genetic material of the virus and generated a huge amount of information that has been used (among other things) to produce vaccines that are being tested for safety and efficacy, to identify strains of the virus that allow us to trace its spread, to develop diagnostic tests to detect viral infection in individuals, and even to detect the virus in sewage in order to identify which communities may have a rising caseload! At no time in the history of infectious diseases has progress happened so fast. Contrary to what was initially believed, scientists have discovered that the SARS-CoV-2 virus (which causes the disease COVID-19) can infect many cell types once it has gained access to the body through the airways, including the cells lining the blood vessels, and this has changed the way we view the disease, saving and improving the lives of patients. For example, one of the problems with seriously ill patients is blood clots, and the use of anticoagulants is helping to save lives. Scientists have also tested several drugs against COVID-19 and have found steroids to be effective at reducing mortality in the sickest patients. Other approaches that may also be useful, and are being tested, are the drug remdesivir and convalescent plasma. After being ambivalent with regard to masks, scientists discovered that a large amount of COVID-19 transmission occurs from people who are asymptomatic or presymptomatic. This discovery led to the guideline to wear masks, which, along with social distancing and other mitigation measures, has bought us time to better understand the virus and has saved lives. At the level of the hospitals, doctors integrated domestic and international experiences to develop better ways of preparing for a surge in hospitalizations and handling large numbers of patients.
They also recognized that delaying ventilation and placing ventilated patients in the prone position could improve their odds of survival. The PROCESS of science can be messy and confusing, and now that it is out in the open on a daily basis, it is being presented to the general public in the worst possible light by many people interested in disavowing science or pursuing their own agendas. When it comes to science, try to understand that scientists are human. They have disagreements, they make mistakes, and they change their minds. You may not understand all the technical terms and issues, all the back and forth among scientists, or what’s true and what isn’t. But the PROGRESS of science is a different matter. Science has delivered for us in the past, science is delivering for us now, and science will continue to deliver for us in the future. Concentrate on the end result, on what scientists have achieved. Progress over Process! Progress sign by Nick Youngson from Alpha Stock Images was modified and is used here under an Attribution-ShareAlike 3.0 Unported (CC BY-SA 3.0) license. Coronavirus image by Alissa Eckert, MS; Dan Higgins, MAM, from the CDC's Public Health Image Library is in the public domain and was modified.

There is a joke that illustrates misguided scientific thinking. A man is in a public area screaming at the top of his lungs. When somebody comes over and enquires why he is screaming, the man replies, “To keep away rogue elephants”. When told that there are no elephants for miles around, the man replies that this is proof his screaming is working. If you think that is silly, consider the following recipe for obtaining mice by spontaneous generation (in other words, without the need for male and female mice): Place a soiled shirt into the opening of a vessel containing grains of wheat. Within 21 days the reaction of the leaven in the shirt with the fumes from the wheat will produce mice. No, I’m not kidding you!
For many centuries some of the best minds in humanity believed that life could regularly arise spontaneously from nonlife, or at least from unrelated organisms. Some people went as far as outlining procedures to achieve this, such as the recipe above for generating mice, supplied by the chemist Jean Baptiste van Helmont. Presumably, van Helmont (who was no stranger to misguided scientific thinking) placed a shirt in a vessel with grains of wheat, and when he checked 21 days later he saw a mouse scurrying away. He therefore concluded that the mix of the wheat and the shirt in the vessel produced the mouse. What the above examples of misguided science have in common is that they ignore alternative explanations. In the case of the joke, the obvious alternative explanation is that there were no rogue elephants nearby to begin with. In the other case, the alternative explanation is that the mouse came from elsewhere, rather than arising from the shirt and the wheat. The best scientific experiment is one carried out in such a manner that alternative explanations for the experimental results are minimized or ruled out altogether. The ideal scientific experiment should have only one possible explanation for the outcome. To achieve this, scientists use controls. A control is an element of the experimental design that accounts for variables that could otherwise affect the outcome of the experiment. In the case of the joke, the man could stop screaming to see if rogue elephants show up, or he could scream next to an actual elephant to see if screaming works. In the case of the real example, van Helmont could have placed a barrier around the shirt and the wheat to rule out that mice came in from the outside. The use of controls in experiments is so commonplace nowadays that it is really hard to imagine how anyone could even think of performing an experiment without them.
However, the modern universal notion of controls as a way of controlling variables to rule out alternative explanations of experimental results did not begin to take shape until the second half of the 19th century, even though scholars claim that strategies to make observations or experiments yield valid results go back to the Middle Ages or even antiquity. In science, the controls most often used are those involving outside variables that can affect the results of an experiment. However, the more important and more challenging variables to control are those that arise from within the experimenter. If the procedure to make an observation or evaluate the results of an experiment depends on a subjective judgement made by the experimenter, as opposed to, for example, a reading made by a machine, then subtle (and not so subtle) psychological factors can influence the result depending on the biases of the experimenter. I have already mentioned the famous case of the scientist René Blondlot, who in 1903 announced to the world that he had discovered a new form of radiation (N-rays), but it turned out that such radiation existed only in his imagination. Another famous case was that of the astronomer Percival Lowell who, at the turn of the 20th century, thought he saw canals on Mars, which he considered to be evidence of an advanced civilization. He was a great popularizer of science and wrote several books about Mars and its inhabitants based on his observations, but the whole thing turned out to be a delusion. To avoid this type of mistake, many experiments that rely on subjective assessments employ a protocol where the observer does not know which groups received which treatments. This is called a blind experimental design.

An additional challenge occurs when a scientist works with human subjects. Psychological factors can play a potent role in influencing the results of medical experiments. Depending on the disease, if patients are convinced that they are receiving an effective treatment and that their condition will improve, they can display remarkable improvements in their health even if they have received no effective treatment at all. To rule out the effect of these psychological factors, scientists performing clinical trials include groups of patients treated with placebos. Placebos are fake pills designed to mimic the actual pill containing the active chemical substance, or ineffective procedures designed to mimic the actual medical procedure, in such a way that the patient cannot tell the difference. To also rule out the influence of psychological factors arising from doctors giving patients in one group special treatment, the identity of the groups is hidden from the clinicians. Clinical trials that employ placebos and in which neither the patients nor the clinicians know the identity of the treatments are called double-blind placebo-controlled trials, and they are the most effective and also the most complex forms of control. Unfortunately, the use of controls is not always straightforward. I have already mentioned the case of polywater, which was heralded as a new form of water with intriguing properties that promised many interesting practical applications until someone implemented a control and demonstrated that it was the result of contamination. Sometimes scientists do not know all the variables affecting an experiment, or they may underestimate the effect of a variable that they have deemed irrelevant, or they may misjudge the extent to which their emotional involvement in the experiment may compromise the results.
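For readers who like to see the mechanics spelled out, here is a minimal sketch in Python of the blinding idea described above, assuming a simple two-arm trial with one drug and one placebo. The function name `blind_allocate` and the neutral labels "A" and "B" are hypothetical, chosen only for illustration; real trials use more elaborate randomization schemes and dedicated personnel to hold the unblinding key. The point is simply that chance, rather than the experimenter, decides who gets what, and that nobody treating or evaluating the patients can tell which code stands for the real drug.

```python
import random


def blind_allocate(patient_ids, seed=None):
    """Hypothetical helper: randomly split patients into two arms hidden behind neutral codes.

    Returns (assignments, key):
      assignments -- maps each patient ID to a code ("A" or "B"); this is all
                     that patients and clinicians get to see.
      key         -- maps each code to the real treatment; in a real trial it
                     would be held by a third party until the outcomes are in.
    """
    rng = random.Random(seed)      # seeded generator so the split can be audited later
    ids = list(patient_ids)
    rng.shuffle(ids)               # chance, not the experimenter, decides who goes where
    half = len(ids) // 2           # with an odd count, the second arm gets one extra patient

    codes = ["A", "B"]
    rng.shuffle(codes)             # even which letter means "drug" is decided at random
    key = {codes[0]: "active drug", codes[1]: "placebo"}

    assignments = {pid: codes[0] for pid in ids[:half]}
    assignments.update({pid: codes[1] for pid in ids[half:]})
    return assignments, key


if __name__ == "__main__":
    assignments, key = blind_allocate(range(1, 11), seed=42)
    # During the trial, only the coded labels are visible:
    for pid in sorted(assignments):
        print(f"patient {pid}: group {assignments[pid]}")
    # Only after the outcomes are recorded is the key opened:
    print("unblinded key:", key)
```

Because the clinicians only ever see "group A" or "group B", they have no way of favoring one group, consciously or not, and the sealed key is opened only once the results are in.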
Designing adequate controls into experimental protocols requires not only discipline, discernment, and smarts, but sometimes also just luck. In fact, some scientists would argue that implementing effective controls is not a science but an art. The art of the control! The elephant cartoon from Pixabay is free for commercial use. The Illustration of Mars and its canals by Percival Lowell is in the public domain.

Most scientists have it easy. By this I don’t mean that science is easy, but rather that scientists experiment on animals or OTHER people. Sure, these experiments are conducted following ethical guidelines to minimize pain to laboratory animals or to ensure the safety of patients, but the point of my argument is that it’s easy to administer a treatment that makes the recipient sick, or that carries other risks, when the recipient is not yourself. However, throughout the history of science some scientists have broken through the wall of security that separated them from their test subjects and become their own lab rats, their own patients. These scientists who experimented on themselves conducted what I call “heroic science”. Let’s look at some of these characters. In a previous post, I mentioned the case of the Australian physician Dr. Barry Marshall, who wanted to convince skeptical fellow scientists that ulcers were not caused by excessive stomach acid secretion due to stress, but rather by a bacterium called Helicobacter pylori. Unable to develop an animal model or to obtain funds to perform a human study, he experimented on himself by drinking a broth containing Helicobacter pylori isolated from a patient who had developed severe gastritis. He developed the same symptoms as the patient and was able to cure himself with antibiotics. As a result of this and other studies, Dr. Marshall was awarded a Nobel Prize in 2005.
Another of these heroic individuals was Werner Forssmann, who, as a young surgical resident at a German hospital, wanted to try a procedure to insert a catheter through a vein all the way to the heart. Forssmann was convinced that if this could be done, it would allow doctors to diagnose and treat heart ailments. However, he could not obtain permission from his superiors to perform the experiment on a patient, so he tried it on himself. He made an incision in his arm, inserted a tube, and, guiding himself with X-ray imaging, pushed the tube all the way to his heart. At the time there was a lot of opposition to Forssmann and his unorthodox methods, and although he persevered for some time, he became a pariah in the cardiology field and was forced to switch disciplines, becoming a urologist. However, other scientists eventually refined the catheterization technique described in his work and developed it into valuable medical procedures that have saved many lives. Forssmann had the last laugh when he was awarded the Nobel Prize in 1956. Most heroic science studies didn’t lead to a Nobel Prize, but some resulted in useful information. For example, John Stapp was an air force officer who experimented on himself to test the limits of human endurance in acceleration and deceleration experiments. He would be strapped to a rocket sled that would rapidly accelerate to speeds of hundreds of miles per hour and then stop within seconds. As a result of these brutal experiments, Stapp suffered concussions and broke several bones, but he survived, and the knowledge generated by his research eventually resulted in technologies and guidelines that today protect both car drivers and airplane pilots. Despite its appearance of recklessness, heroic science is seldom performed in a vacuum; rather, it is performed by individuals who believe, based on other evidence, that nothing will happen to them. Such was the case of Dr. Joseph Goldberger.
In the early 1900s, the disease Pellagra afflicted tens of thousands of people in the United States. Dr. Joseph Goldberger performed experiments indicating that Pellagra arose from a dietary deficiency rather than a germ. Faced with recalcitrant opposition to his ideas, Goldberger and his assistants injected themselves with blood from people afflicted with Pellagra and applied secretions from the patients’ noses and throats to their own. They also held “filth parties” where they swallowed capsules containing scabs obtained from the rashes that patients with Pellagra developed. None of them developed Pellagra. This, along with other evidence, demonstrated that Pellagra was not a disease carried by germs. Another case was that of the American surgeon Nicholas Senn, who in 1901 implanted under his skin a piece of cancerous tissue that he had just removed from a patient. As he expected, he never developed cancer. Senn did this to demonstrate that cancer is not transmissible from one human to another and therefore is not caused by a microbe, a conclusion that many pieces of evidence, taken together, already supported at the time. Of course, the mere fact that you perform heroic science doesn’t mean that you will reach the right conclusions. Back in 1892 the German chemist Dr. Max von Pettenkofer disputed the theory that germs caused disease, and specifically that a bacterium called Vibrio cholerae caused the disease cholera. He requested a sample of cholera bacteria from one of the most prominent proponents of the theory, Dr. Robert Koch (who won the Nobel Prize in 1905), and when he got the sample he proceeded to ingest it! Pettenkofer became mildly ill for a while, but did not develop cholera. He claimed that this proved his point, but the vast majority of the evidence generated by others indicated he was wrong, and his claim was never accepted.
A rather remarkable example of misguided heroic science is the work of Doctor Stubbins Ffirth. Ffirth studied the incidence of yellow fever cases in the United States back in the 1700s and noticed that yellow fever was much more prevalent during the summer months. He therefore developed the notion that yellow fever was due to the heat stress of the summer months and was not contagious. To prove this he embarked on a series of gross experiments in which he exposed himself to the bodily fluids of yellow fever patients. He drank their vomit, poured it in his eyes, rubbed it into cuts he made in his arms, and breathed its fumes, and he also smeared his body with the urine, saliva, and blood of yellow fever patients. Since he never contracted the malady, he concluded that yellow fever was not contagious. However, not only did Ffirth employ bodily fluids from late-stage yellow fever patients, whose disease we now know is not contagious, but he also missed the fact that yellow fever is transmitted by mosquitoes (see below), which is the reason why it’s more prevalent during the summer! Finally, some of the scientists who engaged in heroic science suffered or died as a result of their experiments, but their sacrifice saved lives or advanced the understanding of terrible diseases, as the following two cases show.

In 1900 the army surgeon Walter Reed and his team in Cuba put to the test the theory that yellow fever was spread by mosquitoes, which at the time was not taken seriously by many scientists. They had mosquitoes feed on patients with yellow fever and then allowed the mosquitoes to bite several volunteers, among whom were two members of Reed’s team, the American physicians James Carroll and Jesse Lazear. Several of the people bitten by these mosquitoes developed yellow fever, including Carroll and Lazear.
Lazear died, but Carroll recovered, although he experienced ill health for the rest of his life. After this demonstration that yellow fever was transmitted by mosquitoes, a mosquito eradication program was implemented that dramatically reduced the cases of this disease. In the Andes in South America there is a disease called Oroya fever that has periodically decimated the population of some localities. In the 1800s many physicians suspected that this disease was connected to another condition that produced skin warts (Peruvian warts), but no one had ever demonstrated the connection. Daniel Alcides Carrión, a medical student in Lima, the capital of Peru, set out to prove that these two diseases were the same. He removed a wart sample from a patient with the skin condition and inoculated himself with it through incisions made in his arms. Carrión developed the symptoms of Oroya fever, thus demonstrating that these two conditions were different stages of the same disease, which is now called bartonellosis. Unfortunately, he died from the disease, but he is hailed as a hero in Peru.

The above are but a fraction of the cases of individuals who risked life and limb performing heroic science. Many people criticize the usefulness of most cases of heroic science, especially when they involve a sample size of just “one”, and these critics have a point. In the end, heroic science should be held to the same standards of rigor as regular science. However, whether those who experimented on themselves did it out of a need to overcome bureaucratic obstacles, a belief in the correctness of their ideas, scientific curiosity, or because they were crazy, you can’t help feeling a certain degree of admiration for individuals who put their lives and health on the line for a scientific idea. They are willing to cross a line of safety that most researchers wouldn’t dare to cross.

The photograph of Barry Marshall by Barjammar is in the public domain. The photograph of Dr. Goldberger made for the Centers for Disease Control and Prevention is in the public domain. The photograph of Max von Pettenkofer is in the public domain in the US. The photograph of Jesse Lazear from the United States National Library of Medicine is in the public domain.

A long time ago in a college biology lab far, far away… a fellow student and I performed an experiment to assess how different foodstuffs were handled by the intestine. We were not studying anything new; we were just repeating a classic experiment to examine the effect of the composition of food on the speed of digestion. So we took a few groups of rats and fed them either a high-carbohydrate diet or a high-fat diet. We determined how much food the rats had consumed, euthanized the rats at different times after the meal, and measured the weight of the contents of the stomach and intestine. We found that the food made up mostly of carbohydrate emptied quickly from the stomach, and only a small amount of it was present in the intestine.
However, the food made up mostly of fat emptied slowly from the stomach, but more of it was present in the intestine; so far so good. One of our conclusions followed obviously from the results: the fatty food emptied more slowly from the stomach. But in our report on the experiment we went beyond that and also concluded that the food made up of carbohydrate was absorbed into the body faster than the food made up of fat. Later we were furious to find out that our experiment report received the equivalent of a “C”! When we confronted (literally) our professor, he explained that we could not make that conclusion because we had not performed an experiment specifically designed to evaluate the absorption of the food into the body. We were incredulous at this reply. “Where else could it have gone?” we enquired. The professor explained that, no matter how obvious, we could not make this claim without presenting evidence. He said that, for example, we could have measured the levels of certain fats and carbohydrates in the blood vessels draining the intestines and correlated them with the amounts of those nutrients inside the intestine. But absent that evidence, we had made an unwarranted conclusion. Needless to say, we were not too happy with our grade. We had worked really hard to conduct the experiment, staying late in the lab to prepare the diets and make all the measurements. We grudgingly accepted the professor’s argument, but we still could not shake off the idea that, at heart, the whole notion was just a stupid formalism. After all, ingested food doesn’t just disappear; it has to go somewhere. If it is not in the intestine, where else could it have possibly gone but inside the body?
At the time we did not appreciate that the professor’s demand for evidence, even though we were repeating a well-known scientific experiment with a well-known animal model, was meant to teach us to be cautious when performing experiments for the first time with less well-known systems. In fact, if we had paid attention in high school, we would have remembered a famous example of an experiment that reached an erroneous conclusion because it did not follow the cautious approach required by our professor.

In the early 17th century the chemist Jan Baptist van Helmont conducted what is often considered the first quantitative experiment in biology (the results were published posthumously in 1648). He took a pot and filled it with 200 pounds of soil, which he had weighed after drying it in a furnace, and planted a willow sapling weighing 5 pounds in the pot. For 5 years he watered the tree, and at the end of this period the tree weighed 169 pounds. Yet when he dried and reweighed the soil as before, he found only a 2-ounce difference. This indicated that the 164 pounds the tree had gained could not possibly have come from the soil. Van Helmont concluded that the 164 pounds of wood, bark, and roots arose from water. At the time this conclusion must have made sense. After all, from where else could all that extra mass have possibly originated? The only thing the tree seemingly received was water, so the difference in weight could only have come from the water, right? Notice that van Helmont did not produce any evidence to support this conclusion. Much in the same way that we concluded (without evidence) that the food was absorbed because it could not have gone anywhere else, van Helmont concluded that the extra tree mass came from the water, presumably because it could not have come from anywhere else.
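The arithmetic behind van Helmont’s reasoning fits in a few lines. This is a minimal Python sketch using only the figures quoted above (a 5-pound sapling, a 169-pound tree after 5 years, and a 2-ounce loss of dried soil); the function name `mass_balance` is just an illustrative choice. It shows how lopsided the mass balance was, which is why the conclusion felt so obvious.

```python
OUNCES_PER_POUND = 16


def mass_balance(sapling_lb, tree_lb, soil_loss_oz):
    """Return (mass the tree gained in pounds,
    percentage of that gain the soil loss could account for)."""
    gain_lb = tree_lb - sapling_lb
    soil_loss_lb = soil_loss_oz / OUNCES_PER_POUND
    return gain_lb, 100 * soil_loss_lb / gain_lb


gain, soil_share = mass_balance(sapling_lb=5, tree_lb=169, soil_loss_oz=2)
print(f"Tree gained {gain} lb; the soil loss covers only {soil_share:.3f}% of it")
```

The calculation rules out the soil, but nothing in it identifies water (or anything else) as the source of the remaining mass; that step needed evidence of its own.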
It would be more than a century before the work of several scientists, including Joseph Priestley, Jan Ingenhousz, Jean Senebier, and Nicolas-Théodore de Saussure, established that plants take up CO2 from the atmosphere and produce oxygen under the influence of sunlight, and that the gain in weight as a plant grows is due not just to the accumulation of water or its conversion into tree material, but to the fixation of CO2 into the chemical compounds that make up the solid constituents of the wood, bark, and roots. Ironically, van Helmont was the first to identify a gas produced by burning plant material, which he called “gas sylvestre” and which we now know to be CO2, but he never made the connection that plants might take up this substance from the air. So yes, my dear old professor, you were right. Even the obvious must be supported by evidence!

Image of the van Helmont experiment by Lars Ebbersmeyer used here under an Attribution-Share Alike 4.0 International (CC BY-SA 4.0) license.

Some scientific theories that stand in the way of religious, political, and corporate interests have been getting a bad rap. Their foes claim these theories are false. For example, creationists claim that evolution is false, climate change deniers claim that global warming is false, and so on. In fact, many people seem to imply that theories are ephemeral, and to buttress their claim they offer a list of theories that have been proven “false”. Why should we rely on a scientific theory to shape public policy today if it can be shown to be false tomorrow? In addressing this issue there are several things we have to consider. Before we begin, we need to clarify that the word “theory” in popular parlance can be a synonym for a guess or a very preliminary explanation. In science, a theory is a vastly more stable form of knowledge. In fact, if a theory is sufficiently developed, it can itself become a fact. So what are the characteristics of a sufficiently developed theory?
They are:

1) It explains the existing observations and experimental results.
2) It has generated predictions that have been found to be true.
3) It has generated practical applications that work.
4) Results from other scientific disciplines corroborate the theory, and the theory corroborates results in other scientific disciplines.

Please read the list above again carefully. Don’t you think that when a theory fulfils these characteristics we can say with confidence that it has grasped important aspects of the reality it’s trying to explain? But, you may ask, what if a genius like Einstein comes along, thinks up a new interpretation for everything the theory explains and predicts, and expands it into a different theory that explains new things? Can’t we then say the theory was proven false? Well, let’s consider what Einstein did. He reinterpreted Newton’s laws of gravitation and motion and came up with explanations for phenomena that the Newtonian interpretations could not explain. Einstein thus relegated Newton’s laws to particular cases in which velocities are much lower than the speed of light and very strong gravitational fields are not involved. But here is the thing: the motion of planets, rockets, space probes, and everyday objects, at the speeds at which they travel and in the gravitational fields they encounter most of the time, can be described with a satisfactory level of accuracy by Newton’s laws. The existence of a planet (Neptune) and the return of a comet (Halley’s Comet) were predicted using Newton’s laws. The lives of astronauts and the integrity of multimillion-dollar space probes depend on the accuracy of calculations employing Newton’s laws. Is it fair to say that Einstein proved Newton’s theories were false? Of course not! Einstein showed that Newton’s theories were incomplete, and this is what the public has to understand when discussing scientific theories.
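To put a rough number on “a satisfactory level of accuracy”, here is a minimal Python sketch, added as an illustration rather than taken from the original post. It computes γ − 1 for the Lorentz factor γ = 1/√(1 − v²/c²) of special relativity, used here as a rough gauge of how far relativistic corrections depart from Newtonian values at a given speed. The two example speeds are assumptions chosen for illustration (a car at about 30 m/s and a space probe near Earth’s escape velocity, about 11 km/s), and the sketch covers only the velocity side of the argument, not strong gravitational fields.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact, by definition of the metre


def gamma_minus_one(speed_m_s):
    """Lorentz factor minus one, for gamma = 1 / sqrt(1 - v**2 / c**2).

    Computed as beta**2 / (s * (1 + s)) with s = sqrt(1 - beta**2). This is
    algebraically identical to 1/s - 1 but avoids subtracting two nearly
    equal numbers, which would destroy precision at everyday speeds.
    """
    beta_sq = (speed_m_s / SPEED_OF_LIGHT_M_S) ** 2
    s = math.sqrt(1.0 - beta_sq)
    return beta_sq / (s * (1.0 + s))


# Illustrative speeds (assumptions, not figures from the post).
examples = {
    "highway car, ~30 m/s": 30.0,
    "space probe near Earth's escape velocity, ~11 km/s": 11_000.0,
}
for label, v in examples.items():
    print(f"{label}: gamma - 1 = {gamma_minus_one(v):.1e}")
```

Even for the fast probe the correction is below one part in a billion, which helps explain why Newtonian calculations remain “complete enough” for the cases listed above.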
Sufficiently developed scientific theories cannot be false; they can only be incomplete. When assessing scientific theories, it is counterproductive to talk in terms of true or false. What has to be discussed is whether a theory has been formulated at a high enough level of detail, in other words, whether the theory is complete enough. We don’t need theories to be 100% true. They can’t be (nothing can), and they don’t have to be. We only need a theory to be complete enough to be useful for society. Finally, it must be pointed out that the vast majority of scientific theories are not “big name” theories such as the theory of evolution or global warming. There are hundreds of scientific fields and subfields that have given rise to thousands of theories, most of which are boring, highly technical, and irrelevant to the “culture wars”. Therefore they do not make the news, and non-scientists are not even aware of them. Most of these theories have never been overturned, and in fact they form the basis of modern science, leading to tens of thousands of practical applications and policies. If these theories were not sufficiently complete representations of reality, modern life would not be possible! So next time you are pondering the worthiness of a scientific theory, remember: it’s all in the completeness.

The figure is a collage of a copy of a painting of Isaac Newton by Sir Godfrey Kneller (1689), which is in the public domain, and a photograph of Albert Einstein by Orren Jack Turner obtained from the Library of Congress, which is also in the public domain because it was published in the United States between 1923 and 1963 and the copyright was not renewed.

Many snippets of wisdom that have permeated our culture are routinely quoted on social media. One of them, from the Irish playwright George Bernard Shaw and featured in the image above, states that all progress depends on the unreasonable man. Everyone seems to have an affinity for this particular trope.
After all, who doesn’t love the story of the little guy fighting against the establishment? It seems that most of us, within reason, are programmed to root for the underdog. The mavericks, the misfits, the fringe thinkers, the outcasts: why do these characters have a place in our hearts? Is it perhaps because in the daily tedium of our lives, as we persevere overburdened by challenges at work, in our homes, and in society, we sometimes wish we could upturn the established order and start anew? Perhaps we have considered going against the current, challenging the system, rocking the boat, but then deemed the risks too dire and just bowed our heads and kept on going. So maybe when one of these colorful characters who actually dares to challenge the powers that be comes along, we vicariously live out through their struggle a fantasy that we ourselves are too cowardly to bring to reality. Be that as it may, in the field of science many of these characters have captivated the public’s imagination. Take the case of Dr. Barry Marshall, who proposed the hypothesis that stomach ulcers are caused not by excessive acid secretion due to stress, as most experts thought, but by infection with a type of bacteria called Helicobacter pylori. Dr. Marshall failed to convince the scientific establishment. He was not able to develop an animal model of the disease and could not obtain funds to perform a human experiment. So what did he do? He experimented on himself! He drank a broth containing H. pylori isolated from a patient who had developed severe gastritis. Within days he developed the same symptoms the patient had, and he was able to cure himself using antibiotics. It took another decade of struggles, but gastroenterologists were eventually convinced of the truth of his claim, and Dr. Marshall won a Nobel Prize in 2005. Isn’t that a great story?
And like this story, there are many other stories of the unreasonable man battling the system and prevailing in the end. However, the popularization of these stories has generated several inaccurate notions in the public consciousness. The first is the notion that science makes progress only when one of these characters upends conventional wisdom and triggers a revolution. This is not true. Most of the time, progress in science occurs incrementally as thousands of scientists perform vital work within the system, developing new knowledge, methodologies, procedures, and applications. The backgrounds and expertise of these scientists are fundamental to driving any new or old area of science forward. Without these individuals working within the system there would be no science. The notion that ALL progress, at least in science, depends on the unreasonable individual is simply false. The second notion is that just because you are one of the unreasonable individuals you must be right, and the scientific establishment must be wrong. It must be understood that for every individual who has challenged the established order successfully, there have been dozens to hundreds of others who challenged the established order and were proven wrong. The stories of these individuals are normally of little interest except, if at all, to those who write histories of a given scientific field, and they are barely mentioned in the popular press. Finally, the last (and probably most troublesome) notion is that when the scientific establishment lashes out at one of these unreasonable individuals, this is proof of a bias within the scientific community, motivated at best by intellectual conformity and closed-mindedness, or at worst by corrupt influences tied to granting agencies or corporate interests.
However, what the public may interpret as unfair treatment of a scientist by the scientific community is more often than not due to the fact that science is a very conservative enterprise, and the bar to overturn or reinterpret established science is set pretty high. Science is biased towards established knowledge, as it should be! When you go against established science, you’d better have some exceptional evidence and arguments, or else you are going to be given a very hard time! Even scientists with Ph.D.s can propose things that are wrong, misguided, or just plain stupid. Not all ideas deserve to be treated equally, not all evidence is sound, and not all interpretations of the data are correct. What most individuals seeking to change the prevailing scientific paradigm do is address the criticism made by their peers, generate more evidence, and reformulate their ideas or their presentation. Convincing other scientists that you are right is the warp and woof of science. However, a disturbing phenomenon has emerged. Today those individuals who have been rebuffed by the scientific community can take their case to “the people”, arguing that they are victims of a corrupt scientific establishment that is hell-bent on silencing them and discrediting their ideas. Such is the case of Dr. Andrew Wakefield, who, when the medical community rejected his view that vaccination was linked to autism, took his case directly to the public. He actually succeeded in convincing many parents not to vaccinate their children, leading to outbreaks of, and deaths from, diseases that are preventable by vaccination nowadays. Established science is called that for a reason. Scientific theories are constructs that have grasped important aspects of the realities they seek to explain, and they cannot be overturned on a whim. The quixotic quest of the unreasonable man must not be romanticized. These individuals are wrong most of the time, and established science must be protected from them.
If you want to upend established science, the burden of proof is on you!

The image of George Bernard Shaw was modified from a photograph in the George Grantham Bain collection at the Library of Congress and has no known copyright restrictions.

2/7/2018 Is the Earth round? Avoiding The Absolute Truth to Find the Practical Truth: the Devil is in the Level of Detail

Most people hold a “binary” notion of the truth. For them things can only be true or false, because that which is false cannot be true, and that which is true cannot be false. We will call this notion the “absolute truth”. I want to argue that this absolute-truth notion is unsatisfactory and impractical for assessing the worthiness of scientific theories. For this purpose I will use an example. Consider the idea that the Earth is flat. For people in antiquity living in a flat place like the plains or a desert it probably made sense to think this, but eventually the ancients figured out that the Earth was not flat. The Earth is round, and we know that for a fact nowadays. So, the Earth is flat: false; the Earth is round: true, right? Actually, the Earth is not round! As Isaac Newton proposed, and as was later found to be correct, the Earth, due to its rotation, is an “oblate spheroid”, which means it is flatter at the poles and bulges at the equator (the distance from the Earth’s center to sea level is about 13 miles greater at the equator than at the poles). OK, so the Earth is round: false; the Earth is an oblate spheroid: true, right? Well, not quite. The Earth is an oblate spheroid, but the southern hemisphere is wider than the northern hemisphere, giving the Earth a bit of a pear shape. Fine, so the Earth is an oblate spheroid: false; the Earth is a pear-shaped, oblate spheroid: true, right? Actually, even this is false! The Earth’s mass is not distributed evenly across the planet, and the greater the mass, the greater the gravitational force, which leads to the creation of bumps in the Earth’s crust.
Additionally, these bumps change over time due to the movement of continental plates; the changing weight of the oceans, lakes, ice masses, and atmosphere; and the gravitational pull of the sun and the moon. All of these processes can deform the Earth’s crust on the order of millimeters to a few dozen centimeters daily, and by much larger amounts over geologic time. So the Earth is a bumpy, shapeshifting, pear-shaped, oblate spheroid? Yes. Wahoo, we did it! At last we have the absolute truth! The Earth is a pear-shaped, oblate spheroid: false; the Earth is a bumpy, shapeshifting, pear-shaped, oblate spheroid: true, right? At this point you are probably thinking: seriously, are you kidding? This is the problem with the absolute-truth notion: it ignores the level of detail that is required to adequately describe physical phenomena. The level of detail required of a description of the shape of the Earth varies depending on what you intend to use it for. For surveyors determining distances in small patches of the Earth’s surface, a flat-Earth model is perfectly suitable, as the resulting error in the measurements is negligible. For people dealing with time zones, the round-Earth description is perfectly adequate. On the other hand, satellite orbits can be affected by small deviations of the Earth’s shape from a perfect sphere, so people tracking satellites must take into account the oblate-spheroid and pear-shaped deformities of the Earth. Similarly, people running particle accelerators must understand that the Earth is constantly shifting its shape, so that they can keep track of the very small deformities in the Earth’s crust that arise daily and may mess up their measurements. The vast majority of people would accept that the claim that the Earth is flat is false (low level of detail).
On the other hand, most people would consider further refinements to the round-Earth claim, such as the oblate spheroid; pear-shaped, oblate spheroid; or bumpy, shapeshifting, pear-shaped, oblate spheroid, to be a needless amount of precision (too high a level of detail), and rightly so. The deviations from a spherical shape that these highly detailed models of the Earth deal with amount to at most about a dozen miles. If you take into account that the Earth’s radius is 3,959 miles, we are talking about a difference of 0.3%. By this token the Earth’s crust, despite its mountains and sea trenches, is very smooth. So for the use that most of us make of information about the shape of the Earth, a round-Earth model offers an acceptable level of detail. Depending on what you are trying to explain or achieve, trying to find the absolute truth may be not only impossible or unnecessary, but also cumbersome. So we come to the paradoxical realization that seeking the absolute truth may actually hinder or prevent our discovery of the practical truth! When scientists seek the truth regarding physical phenomena, what they have in mind is the practical truth, which is a truth defined at a level of detail high enough to explain the phenomena they are studying and to derive predictions and useful applications. Later on, other scientists may seek to explain things at a higher level of detail to address more complex questions that a less detailed truth can’t answer satisfactorily, and so on. What the public has to understand is that a scientific theory does not have to explain everything to be considered “true”. It just has to explain the relevant phenomena at a sufficiently high level of detail to generate accurate predictions and useful applications. The debate should not be about whether a given theory is true or false, but rather about whether a theory has been formulated at a sufficiently high level of detail for society to benefit from it.
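Two claims in this post, that a flat-Earth model is good enough for surveying small patches and that the detailed shape refinements amount to about 0.3% of the radius, come down to simple arithmetic. The Python sketch below is an illustration under stated assumptions: it treats the Earth as a perfect sphere with the 3,959-mile radius quoted above, uses a hypothetical 10-mile survey line, and measures the flat-Earth error as the difference between the distance along the curved surface (the arc) and the straight chord between its endpoints. That is only one simple way to quantify the error; real surveying corrections are more involved.

```python
import math

EARTH_RADIUS_MI = 3_959.0   # radius quoted in the post
MM_PER_MILE = 1_609_344.0   # 1 mile = 1,609.344 m


def flat_earth_error_mm(arc_length_mi, radius_mi=EARTH_RADIUS_MI):
    """Arc length minus straight-line chord on a sphere, in millimetres.

    The chord spanning an arc of length s on a sphere of radius R
    is 2 * R * sin(s / (2 * R)).
    """
    chord_mi = 2 * radius_mi * math.sin(arc_length_mi / (2 * radius_mi))
    return (arc_length_mi - chord_mi) * MM_PER_MILE


def relative_deviation_percent(deviation_mi, radius_mi=EARTH_RADIUS_MI):
    """A deviation from a perfect sphere as a percentage of the radius."""
    return 100 * deviation_mi / radius_mi


print(f"10-mile survey line, arc minus chord: {flat_earth_error_mm(10.0):.1f} mm")
print(f"12-mile deviation: {relative_deviation_percent(12.0):.2f}% of the radius")
print(f"13-mile equatorial bulge: {relative_deviation_percent(13.0):.2f}% of the radius")
```

Over a 10-mile line the flat-Earth shortcut is off by only a few millimetres, while even the largest shape refinements stay around a third of a percent of the radius.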
The image from NASA is in the public domain.

The number of animals used in research each year is in the millions, and the majority of these are mice. There are various reasons for this, including the fact that mice are cheaper than larger animals, they reproduce faster, they are easy to handle, and the physiology and biochemistry of mice have many similarities with those of humans. Mice even develop many diseases that humans develop, like cancer and diabetes. These traits have made mice the animal of choice for gene manipulation studies. As a result, many lines of mice carrying genetic alterations have been developed, and these have proven invaluable for studying disease processes in humans. Normally, when researchers decide to employ an animal to study a human disease, they have to evaluate whether the animal adequately models the disease as it occurs in humans. However, so many mouse disease models have already been established that most researchers go along with the prevalent practice in the field of employing certain models. And this makes sense, right? Why reinvent the wheel? After all, these models have been used before, they have led to reproducible observations, and key discoveries have been made with them. So, what do researchers do? They feed the mice the right diet, provide them with water, bedding, etc. for their cages, and perform the experiments according to ethical guidelines. Researchers also make sure that they understand the variables they are dealing with, use the right controls, and execute the experiments in a competent fashion. What else is there to it? What about the temperature at which the mice are housed? Until recently, if you asked researchers this seemingly mundane question, they would draw a blank. They would probably answer that you keep the mice at room temperature, of course. And comfortable room temperatures are around 20-22 °C.
You don’t want to be doing experiments in a very warm environment where you are going to be dripping sweat over everything you are doing, and you don’t want to be doing experiments in a very cold environment where you are going to be shivering. Scientists have to make judicious decisions about what variables to control or keep track of in their experiments. You can’t control or keep track of everything. Why should housing temperature be an issue? Several physiologists over the years have been sounding the alarm about the problem of housing mice at room temperature, but only recently have their voices captured the attention of a critical mass of scientists. The problem is that housing mice at room temperatures of 20-22 °C is indeed comfortable, but for humans! As I have noted before in this blog, mice have a very large surface-to-volume ratio, so heat exits their bodies very quickly. This is why mice have a metabolic rate, per unit of body mass, about 7 times greater than that of a human. Room temperatures of 20-22 °C feel comfortable to us because at these temperatures we achieve thermoneutrality. This means that at these temperatures our bodies spend the least amount of energy on heating or cooling. However, for mice the temperature of thermoneutrality is around 30-32 °C, and this is not a theoretical calculation: if a mouse is placed on a thermal gradient, these are the temperatures it seeks. Mice housed at 20-22 °C are under cold stress. So when researchers use mice housed at room temperature to model a human disease, what they are really modelling is that disease in cold-stressed humans! Housing mice at human room temperatures dramatically changes their physiology and biochemistry, and therefore the way they respond to disease and to drugs against diseases. For example, immunological responses are impaired in mice housed at room temperature, which affects the way they respond to cancers and anticancer drugs.
Also, mice housed at human room temperatures are more resistant to obesity and diabetes. The problem now is that millions of experiments ranging from basic science to drug development have been performed on mice housed at human room temperatures! So the question arises: which of these results are valid? Do millions of experiments need to be repeated? Fortunately, the adaptations of animals like mice to a cold environment are well understood, and researchers can use this knowledge to estimate how much housing temperature is likely to affect experimental results and then repeat any relevant experiments. In fact, this knowledge may present an opportunity to improve mouse models! It has been observed that many drugs that worked in mice did not work in human beings, and the reason why is not clear. Could housing temperature have had anything to do with it? This remains to be determined. Additionally, the right temperature at which to house mice may depend on what is being studied. Some phenomena involving increased energy expenditure and other related variables may actually be better modelled in mice housed at cold temperatures. Finally, housing at both cold and thermoneutral temperatures may give us a better understanding of how stress interacts with the variables involved in disease in ways that are relevant to patients. For example, mice bearing tumors tend to prefer significantly warmer temperatures (37-38 °C) than healthy mice, indicating that cancer induces a form of cold stress. This issue of housing temperature illustrates the importance of bringing experience from several fields of science to bear on the methodological procedures to be followed. Scientists may be very smart and very competent, but each has in-depth training in their own field of study, and what may seem like an unimportant variable in one field may be recognized as something to keep under control in another.
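The surface-to-volume argument made earlier in this post can be illustrated with a deliberately crude model. The Python sketch below assumes (these are assumptions, not figures from the post) a 25-gram mouse and a 70-kilogram human, both treated as spheres of the same density. For a sphere, surface area divided by volume equals 3/r, and r grows with the cube root of mass, so the smaller body has far more surface per unit of volume through which to lose heat. As a cross-check, the sketch also applies Kleiber’s rule, a widely cited empirical scaling in which metabolic rate per unit of body mass goes as mass to the power -1/4; with these assumed masses it lands close to the 7-fold figure quoted above. Both are rough approximations, not physiological measurements.

```python
import math

MOUSE_MASS_KG = 0.025   # assumed typical laboratory mouse
HUMAN_MASS_KG = 70.0    # assumed adult human


def sphere_radius_m(mass_kg, density_kg_m3=1000.0):
    """Radius of a sphere of the given mass. The density (that of water) is an
    assumption; it cancels out when two bodies of equal density are compared."""
    volume_m3 = mass_kg / density_kg_m3
    return (3 * volume_m3 / (4 * math.pi)) ** (1 / 3)


def surface_to_volume(mass_kg):
    """Surface area / volume of a sphere: 4*pi*r**2 / (4/3*pi*r**3) = 3 / r."""
    return 3 / sphere_radius_m(mass_kg)


sv_ratio = surface_to_volume(MOUSE_MASS_KG) / surface_to_volume(HUMAN_MASS_KG)

# Kleiber's rule: whole-body metabolic rate ~ M**0.75, so the rate per kg ~ M**-0.25.
per_kg_metabolism_ratio = (HUMAN_MASS_KG / MOUSE_MASS_KG) ** 0.25

print(f"Surface per unit volume, mouse vs human: {sv_ratio:.1f}x")
print(f"Per-kg metabolic rate (Kleiber), mouse vs human: {per_kg_metabolism_ratio:.1f}x")
```

Neither number is exact biology, but both point the same way: a mouse sheds heat far faster relative to its size, which is why a temperature that is comfortable for us is a cold challenge for it.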
Image by Neil Smith available under a Creative Commons license: Attribution-NonCommercial-ShareAlike 3.0 Unported (CC BY-NC-SA 3.0).
