|

1/29/2021 The Myth of the Unprejudiced Scientist and the Three Levels of Protection Against Bias

As modern science was emerging during the 18th and 19th centuries, ideas regarding how science should be performed also began to take shape. One particular idea posited that scientists subject theories to test, but do not allow their biases to get in the way. Real scientists, it was argued, are unprejudiced, and rather than try to prove theories right or wrong, they merely seek the truth based on experiment and observation. According to this notion, real scientists don’t have preconceptions, and they don’t hide, ignore, or disavow evidence that does not fit their own views. Real scientists go where the evidence takes them.

Unfortunately, the romantic notion that real scientists operate free of bias proved to be dead wrong. Scientists, even “real scientists”, are human beings, and like all human beings they have biases that influence what they do and how they do it. While a minority of scientists does engage in outright fraud, even honest scientists who claim they are only interested in the truth can unconsciously bias the results of their experiments and observations to support their ideas. In past posts I have already provided notable historical examples of how scientists fooled themselves into believing they had discovered something that wasn’t real, such as the cases of the physicist René Blondlot, who thought he had discovered a new form of radiation, or the astronomer Percival Lowell, who thought he had discovered irrigation channels on Mars.

It is in part because of this realization that scientists developed several methodologies to exclude bias and limit the number of possible explanations for the results of experiments and observations, such as the use of controls, placebos, and blind and double-blind experimental protocols. This is the first level of protection against bias. Today the use of these procedures in research is pretty much required if scientists expect their ideas to be taken seriously.

However, while nowadays scientists use and report using the procedures described above when applicable, the proper implementation of these procedures is still up to the individual scientists, which can lead to biases. This is where the second level of protection against bias comes into play. Scientists try to reproduce each other’s work and build upon the findings of others. If a given scientist reports an important finding, but no one can reproduce it, then the finding is not accepted.

But even with these safeguards, there is still a more subtle possible level of bias in scientific research that does not involve unconscious biases or sloppy research procedures carried out by one or several scientists. This is bias that may occur when all the scientists involved in a field of research accept a certain set of ideas or procedures and exclude other scientists who think or perform research differently. Notice that above I used the words “possible” and “may”. The development of a scientific consensus within the context of a well-developed scientific theory always leads to a unification of the ideas and theories in a given field of science. So far from bias, this unification of ideas may just indicate that science has advanced. But in general, and especially early on in the development of a scientific field, science is best served not when researchers agree with each other but when they disagree. 
So the third level of protection against bias that keeps science true occurs when researchers disagree and try to prove each other wrong. In fact, some funding agencies may even purposefully support scientists who hold views that are contrary to those accepted in a field of research. The theory is that this will lead to spirited debates, new ways of looking at things, and a more thorough evaluation of the thinking and research methods employed by scientists.

However, disagreements among scientists do have a downside that is often not appreciated. While science may benefit when, in addition to Scientist A, there is a Scientist B researching the exact same thing who disagrees with the ideas and theories of Scientist A, science may not necessarily benefit when Scientists A and B research something and A thinks that B is wrong and doesn’t have the slightest idea of what he or she is doing, while B thinks A is an idiot who doesn’t understand “real science”. There are a number of examples of long-running feuds in science between individual scientists or even whole groups of scientists. In these feuds, the acrimony can become so great that the process of science degenerates into dismissal of valid evidence, screaming matches, insults, and even playing politics to derail the careers of opponents.

But, of course, the problem I have described above is nothing more than the human condition, and in this sense science is not unique. Every activity where humans are involved has to deal with bias, but at least in science we can recognize we are biased and implement the three levels of protection against this bias. It may be imperfect, it may be messy, it may not always work, but, being human, that’s the best we can do.

Image from Alpha Stock Images by Nick Youngson used here under an Attribution-ShareAlike 3.0 Unported (CC BY-SA 3.0) license.


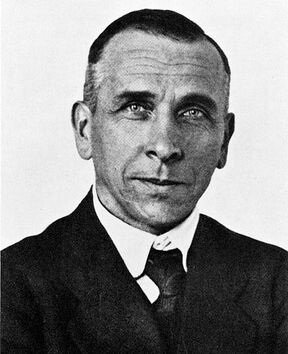

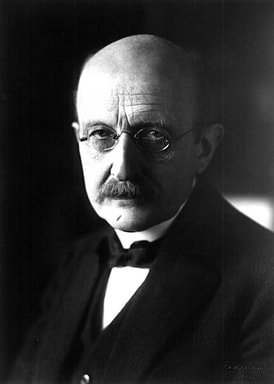

I once attended a science meeting where one of the attendees was proposing the existence of a new metabolic pathway that was not recognized by the prevailing thinking in the field. After he presented the evidence for his pathway, in the next talk one of the other researchers presented the evidence against the existence of such a pathway. This second researcher then concluded his presentation by raising his voice and stating that he considered the proposed new pathway to be: “COMPLETELY IGNORABLE!” while he slammed his hand flat on the lectern once during the word “completely” and again during the word “ignorable”.

The above story is not an isolated incident. Many fields of science have had their share of controversy, often accompanied by vitriolic, long-running feuds among rival scientists who seemingly resorted to using science not as a method to discover the truth but as a platform to hurl insults. And the situations that stir the most passions arise when conventional wisdom is challenged: the more revolutionary the idea, the more the attacks rain upon its proponents. There are quite a few examples of these occurrences in the history of science, but I want to mention three here.

I have already cited the case of the American neurologist and biochemist Stanley Prusiner, who in 1982 proposed the existence of an infectious agent made exclusively of protein (no DNA or RNA), which he christened the “prion”. He was vilified in public and private by other scientists, but persevered and eventually won the Nobel Prize in 1997.

(Photo: Dan Shechtman)

Another case is that of the Israeli scientist Dan Shechtman, who discovered a new type of crystal that did not conform to the rules that crystals were thought to follow according to the field of crystallography. When he published his findings about these “quasicrystals”, the negative reaction to his discovery was so strong that the leader of his research group asked him to leave the team for bringing disgrace to it. 
Shechtman was ridiculed and lectured about the basics of crystallography by other scientists, and the two-time Nobel Prize winner Linus Pauling famously stated that “there are no quasi-crystals, but quasi-scientists”. Shechtman eventually prevailed and was awarded the Nobel Prize in chemistry in 2011.

(Photo: Alfred Wegener)

Finally, there was the German meteorologist and geophysicist Alfred Wegener, who proposed in the early twentieth century that the position of continents is not fixed, but rather that they move slowly over geological periods of time (continental drift). He was called “delirious” and a “stranger to the facts”. His theory was labelled “pseudoscience” and a “fairy tale”. New students in geology were advised by their mentors that acceptance of continental drift would hurt their careers. Through it all, Wegener kept refining his theory and answering his critics. Eventually, after several decades during which new discoveries were made regarding the spreading of the sea floor and new disciplines such as paleomagnetism demonstrated the drift of continents, his ideas were accepted and incorporated into the new theory of plate tectonics. Unfortunately, Wegener did not live to see this. He died during an expedition to Greenland in 1930.

Why should scientists be so hypercritical? Isn’t science about proposing hypotheses and testing them through observation and experiment? Why should proposing a hypothesis, no matter how far-fetched, be met with virulent skepticism, humiliation, and insults? This has, of course, nothing to do with the scientific method. The reasons for this must reside in human nature. To my knowledge, the exact explanation has not been pinpointed, but I believe there are two phases to this phenomenon.

The first phase is the short-term response of a scientist upon being confronted with an idea that upends his or her world view. 
During this phase it may be understandable that an individual will engage in a “knee-jerk” reaction and latch on to any imperfections in the idea in order to criticize it and its proponent. The second phase is what happens over a longer time frame of weeks, months, or even years after the idea has been proposed. This phase is the more interesting one because the short-term emotions have quieted down and scientists make calculated decisions as to what to do about the idea.

The forces governing this second phase have been equated with the perception of how young and old individuals react to change. It is argued that old scientists are more comfortable with established knowledge, especially if they played a role in generating this knowledge. These older individuals may view any proposal that threatens to upend the conventional wisdom with suspicion and skepticism, to the point of using their influence to block funding for research into the new idea, impede the publication of research regarding the idea in mainstream journals, or even hinder the academic careers of the proponents. On the other hand, young scientists who are beginning their careers may be more receptive to new ideas and more welcoming to the individuals who propose them.

(Photo: Max Planck)

The physicist Max Planck once famously remarked: “A new scientific truth does not triumph by convincing its opponents and making them see the light, but rather because its opponents eventually die, and a new generation grows up that is familiar with it.” This notion that scientific progress ultimately depends on the replacement of one generation of scientists by another is often humorously phrased as “science advances one funeral at a time”. While there may be some truth to this, I believe this generational replacement notion is not the whole story, and may in fact just reflect the difficulty in carrying out confirmatory experiments or observations. 
The thing at the heart of scientific progress is the ability to replicate experimental results or observations, or test an idea. If replicating a controversial experiment or observation, or testing an idea, is something that can be accomplished by one lab in a short time within current budgets, acceptance of a new idea may occur relatively quickly. This happened in the case of Shechtman, and also, although more slowly, in the case of Prusiner. Other scientists, both young and old, tried to replicate the experiments of Shechtman and Prusiner and indeed obtained the same results.

On the other hand, if replicating experimental results or observations, or testing an idea, requires expensive studies that take many years and involve coordination between several laboratories and travelling around the globe, or the development of new experimental tools or even new scientific disciplines, the process may be much slower, especially after you factor in the resolution of any technical problems that may arise. This happened in Wegener’s case. During these longer time frames, you may have a significant dying out of old scientists and their replacement with a younger generation. If the new ideas are finally accepted, the long time frame that it took for this to happen may give rise to the notion that the idea was accepted only because the old scientists died, which may not be the case.

Be that as it may, the scientific establishment clearly sets a very high bar for new ideas to be accepted. Proposing an idea that runs contrary to the established order is not for the faint of heart. But it may just happen that if you can put up with the insults, in the end you may prevail after enough funerals!

Photograph of Dan Shechtman by Holger Motzkau used here under an Attribution-ShareAlike 3.0 Unported (CC BY-SA 3.0) license. The Wegener photo from the Bildarchiv Foto Marburg and the Max Planck photo by Transocean (Photographic company, Berlin) are in the public domain. |
