
The general public believes that successful scientists are those who discover something important or propose a new theory that explains things that no one could explain before. However, this belief leaves out a critical detail. How is it decided whether the discovery is valid or the theory is right? After all, maybe the scientists made a mistake in the observations and/or experiments that the discovery is based upon, or maybe the scientists missed some crucial details when formulating the theory. Who decides if this is the case? The answer is: their peers. The work of scientists is evaluated by their peers. These are scientists who are also experts in the field. Successful scientists are not just those who make discoveries and propose new theories. Successful scientists are those who convince their peers that the discoveries they made are valid and that the theories they proposed are right. The peers of a scientist are what constitute the most immediate branch of the so-called “scientific establishment”. Success in science is convincing the scientific establishment that you are right. In this sense the scientific establishment is the keeper of the virtue of science. If you want to get your ideas accepted and the old ideas thrown out, you have to convince other scientists that you are right. Many of these scientists are going to evaluate your ideas and even try to reproduce your observations and/or experiments, and if they don’t think your ideas make sense, or they don’t obtain the same results, or make the same observations, you will get nowhere. And the more revolutionary your ideas are, the harder it is to convince the scientific establishment. This is because science is very conservative and the bar to overturn established scientific knowledge is set quite high. Because of the above, the scientific establishment sometimes rejects new ideas that are true. 
I have already mentioned the cases of Carlos Finlay, who proposed that mosquitoes transmitted Yellow Fever, Alfred Wegener, who proposed that continents move (Continental Drift), and Stanley Prusiner, who proposed the existence of a new infectious agent (Prion) made up only of protein. These scientists had to persevere for a long time against resistance and often outright hostility from their peers to get their ideas accepted. However, for every visionary who is given a hard time by their peers and nevertheless succeeds, the scientific establishment rejects hundreds of others, most of whom are simply individuals who proposed an idea that turned out to be wrong, and some of whom turn out to be frauds. When a scientist is rejected by the scientific establishment, what happens next depends on the individual scientist. Some scientists are unconvinced by the objections to their ideas and keep on fighting to get accepted. Others admit that their ideas were not so great after all and move on to developing other ideas. Still another group of scientists may quit research altogether and go into other areas where their scientific training allows them to make a living. Nevertheless, all these scientists share one characteristic. They are all willing to be judged by their peers. They understand that their success relies on convincing other scientists that they are right. All these scientists, whether they make it in science or not, accept the role of the scientific establishment as the ultimate arbiter of what is accepted or not.

Taking Your Case to "The People"

However, a new option has opened up for scientists in modern times, and that is taking your case to “The People”. This option works best if your particular area of research has captivated the attention of the public, and especially if it has become politicized.
The individuals that exploit this option claim that the scientific establishment is beholden to powerful interests, and because their ideas go against those interests, they are being unfairly attacked and rejected by their peers. These scientists normally peddle their grievances to segments of the population that for one reason or another are opposed to the scientific establishment. The beauty of this approach is that 1) the general public is not qualified to judge the quality of a scientist’s ideas in a complex field of research, and 2) you will always find a lot of goodwill among people if you are perceived to be battling their favorite boogeyman. If the scientists taking their case to "The People" are savvy in public relations and communication, they can develop a large following of individuals who will attend their lectures, buy their books and other products, and even make donations to promote their cause. Scientists that are successful in taking their case to "The People" have bypassed the checks and balances of science and are free to promote any idea regardless of its scientific validity. Far from this being an innocuous activity, capturing the imagination of people with wrong or unproven ideas can have dire consequences. For example, I have mentioned the case of the scientist Peter Duesberg, who back in the 1980s opposed the finding that HIV causes the disease AIDS, and that antiretroviral drugs were required to treat AIDS patients. Duesberg developed a very vocal following of scientists and non-scientists who were dubbed the AIDS denialists. This group and their ideas were shunned by the scientific establishment, but they succeeded in convincing many people, among them the South African president Thabo Mbeki, who delayed the introduction of anti-AIDS drugs into South Africa, leading to hundreds of thousands of preventable deaths.
Another example I have mentioned is the case of the British scientist Andrew Wakefield who in 1998 published an article where he alleged a link between the measles, mumps, rubella (MMR) vaccine and autism. The article was widely publicized by the media, and many parents concerned about the issue refused to vaccinate their children leading to an increase in the rates of the diseases and several deaths. It was eventually found that Wakefield had modified the patients’ medical histories and the article was retracted due to scientific misconduct. Numerous studies have not found any links between autism and vaccines. Wakefield lost his license to practice medicine in the United Kingdom, and moved to the US where he acquired a large following and helped spawn the modern antivaccination movement which has created the dangerous situation of vaccine hesitancy. So be highly skeptical of scientists that take their case to “The People”. These individuals have been rejected by their peers for a reason. It is very likely that their ideas are wrong. If you follow them, you do so at your own peril. The image, which is not related to the topic of this post, is a free download from pixy.org, and is in the public domain.


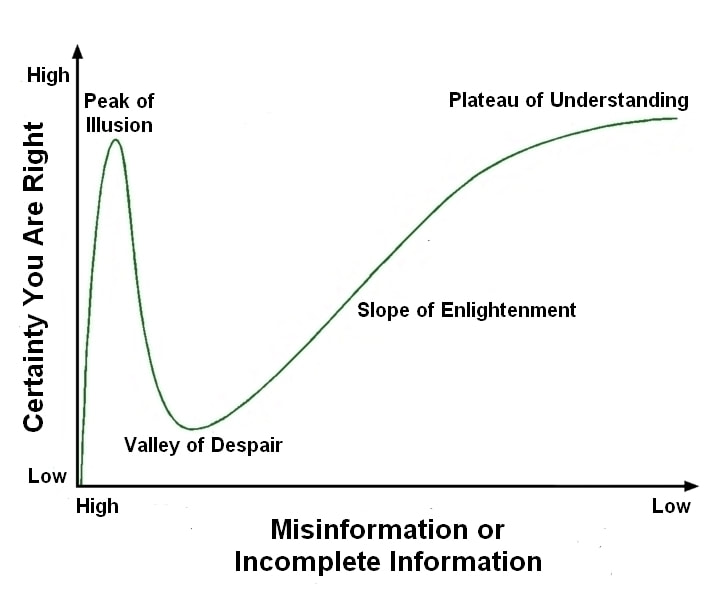

2/20/2021

An Important Challenge for Our Time: Helping People Descend From the Peak of Illusion

I have often written in my blog about conspiracy theories and those who believe in them. Among the conspiracy theory believers I have mentioned are global warming deniers, as well as antivaccination advocates, creationists, and flat Earth proponents. The newest addition to this list are the COVID-19 severity and mitigation deniers. In past posts, I have addressed some of these conspiracies, explained why they are wrong, and delved into why people believe in them. What I want to do in this post is present my thoughts regarding the similarities and differences between the intellectual processes of conspiracy theorists and those of scientists. In doing so I will use as an aid the graph depicted below, which is borrowed from an apocryphal interpretation of the Dunning-Kruger Effect (which may or may not exist, but that’s another story) and bears some resemblance to cultural adaptation curves. I don’t claim this graph to be true. I am just employing it to present my view regarding how I think certainty is related to misinformation or incomplete information. As I have written before, conspiracy theorists dismiss the experience and knowledge of mainstream experts because they consider them to be biased, and only accept input from people that go against the consensus in their fields. Conspiracy theorists believe that even with no scientific training they are as competent as the experts to read the scientific literature and figure things out. Invariably these individuals end up adopting an understanding of reality based on high levels of misinformation or incomplete information that merely reflects their biases. In the graph above this is indicated by the green curve that starts at the lower left-hand corner and quickly shoots up to what I call the “Peak of Illusion”.
The individuals atop this peak have developed a high certainty they are right based on misinformation or incomplete information. The peak of illusion is a really cool place to be. Everything makes sense and there is no confusion or doubt. I know, because I have been there a few times (see below). Notice, however, that there is not a lot of room atop the peak of illusion. If the conspiracy theory believers start questioning the misinformation they have accepted or start accepting new information that contradicts previous notions, the certainty that they are right will begin to decrease, and this process will take them tumbling down to the Valley of Despair. The valley of despair is a gloomy place of uncertainty full of “ifs”, “buts” and “maybes” where it is not clear what is real, what isn’t, or what to do next. Most conspiracy theorists are stuck on the peak of illusion and avoid the valley of despair like the plague. Why is this? I think that one reason why conspiracy theory believers are marooned atop the peak of illusion is that for many people even the illusion of certainty is preferable to uncertainty. Another reason may be that some conspiracy theories have religious undertones that link the faith of the believer to the conspiracy. Thus they may think that questioning the conspiracy is tantamount to questioning their faith. Yet another reason may be that some people find a sense of community in belonging to a group of fellow conspiracy theory believers, who in turn exert peer pressure on anyone questioning the belief in the conspiracy. Conspiracy theory believers may also be so emotionally invested in the conspiracy that they are subconsciously ashamed to admit they are wrong, and any criticism may make them lash out and double down on their beliefs. Be that as it may, many of the traits that believers in conspiracy theories exhibit are also shared by other people, including scientists. Scientists are human.
They make mistakes; they get led astray by wrong data, or they may fall in love with beautiful ideas that turn out to be wrong. The vast majority of scientists (including me) have been to the peak of illusion several times during their careers. They know what it is to be confident that they have found the answers just to have their ideas blow up in their faces. So why aren’t most scientists stuck on the peak of illusion? The answer is a combination of training and experience. Scientists have been trained in the procedures to evaluate the veracity of ideas and interpretations. They accept the importance of controls, placebos, randomization, and blind experimental protocols. They try to reproduce experiments and observations, and they engage each other in critical discussions. Scientists also have been wrong many times, and they have learned to identify the personal frailties that have led them to be wrong. Thus, by virtue of their training and experience, most (but not all) scientists have learned to recognize the misleading siren song of certainty emanating from the peak of illusion and are naturally skeptical. Ideas must be tested, protocols must be followed, and facts must reflect reality. Self-doubt is essential in science. Thus, in terms of the graph, the main distinction between conspiracy believers and scientists is that the majority of scientists SEEK the valley of despair. This is because they know that only within the confines of this bleak vale of confusion lies the path up the slope of enlightenment to the plateau of understanding. Granted, this path is not always clear. Progress in science more often than not is a meandering process replete with fits and starts, blind alleys, dead ends, and returning to square one. And what is not shown in the graph is that many paths leading away from the valley of despair can potentially lead back to the peak of illusion. 
However, as the scientific process moves forward and ideas are tested, accepted, or discarded, sufficiently developed theories emerge that explain the facts, useful applications are generated, and a consensus is reached that an understanding of reality has been achieved at a sufficiently high level of detail. It is only then that most scientists become certain that they are right. And this certainty is not illusory, because it is firmly rooted in reality. Therefore, when we scientists and others who accept science and its methods stand atop the plateau of understanding, look across that vast chasm, and see those people stuck on the peak of illusion denying evolution, global warming, the round Earth, the need for vaccination, or COVID-19 severity and mitigation, we wonder, how can we help them? How can we get them down from there and over to our side? This is a topic that I will address in other posts, but I believe that helping these people transition from believing in these conspiracies to accepting reality is an important challenge for our time. The graph by Sciencia58 was modified and is used here under a CC0 1.0 Universal (CC0 1.0) Public Domain Dedication license.

Childbirth at Home in the 16th Century

The story goes like this. For centuries women gave birth at home alone or aided by other women. The late 18th and early 19th centuries saw the appearance of the so-called lying-in hospitals, which began replacing home births with births at a centralized location aided by individuals trained in childbirth practices. While this change worked well initially, a terrible problem began to develop in some of these hospitals: a rise in the number of cases of puerperal fever. Puerperal fever or childbed fever was a disease that struck women after delivery. Its most common symptoms were high fevers, intense abdominal pain, and foul-smelling vaginal discharge.
Although this disease was often fatal, among women who gave birth at home the fatality rate due to puerperal fever was 1% or less, whereas in some of the lying-in hospitals the fatality rate was 5-20%. Some physicians suspected that cleanliness had something to do with the disease, but none of the cleaning procedures they implemented worked. Ignaz Semmelweis was a physician appointed to serve as the head doctor in an obstetrics clinic in Vienna in 1847. The clinic had a high rate of mortality due to puerperal fever. He suspected that the disease had to do with the fact that the same doctors and students who performed examinations on pregnant women and assisted with childbirth (which included him) also performed dissections on cadavers as part of their work and studies. Although these people would wash their hands with soap and water after dissection, the smell of the corpses lingered on their hands afterwards. Semmelweis suspected that “cadaveric particles”, which were transmitted from the corpses to the women by people performing dissections, were to blame for the disease. Against stiff resistance, he instituted a protocol where he required anyone entering the maternity ward to clean their hands with a chlorine solution, which he found eliminated the smell from dissecting corpses. As a result of the hand-washing protocol, the rate of puerperal fever in the clinic started decreasing. Semmelweis further refined his idea to include not only transmission from corpses to patients, but transmission from sick patients to healthy ones, and instead of “cadaveric particles” he considered the origin of the disease to be any form of “decaying organic matter”. Against more opposition, he required anyone examining pregnant women to wash their hands between patients, and he succeeded in lowering the rate of puerperal fever to that of women giving birth at home.
Semmelweis’s discovery was a triumph of science, and you would have expected that he would have been hailed a hero by his peers, but just the opposite happened. When he communicated his findings to the scientific community, the majority of other physicians in Europe rejected his theories and methods. He was not reappointed to his post in the clinic in Vienna and had to leave. After he left, the hand washing was discontinued and the high death toll due to puerperal fever returned. Semmelweis went on to work in the obstetric ward of a clinic in the city of Budapest where once again by instituting hand washing he was able to bring down the rate of puerperal fever. He continued trying to get his ideas accepted but met with little approval. Around 1861, Semmelweis began to develop a form of dementia and eventually had to be institutionalized. He died in an insane asylum in 1865.

Statue of Semmelweis

It was many years later, with the research of scientists such as Pasteur, that the role that bacteria played in infection was understood. This led other scientists such as Lister to introduce methods of cleaning the hands and instruments of surgeons (antisepsis) to avoid infection, and these methods were then widely applied in the 1880s to maternity hospitals in Europe, leading to a dramatic decline in puerperal fever. The research of Semmelweis was rediscovered in 1887, and this led to a revival of interest in his life and work. Today he is hailed as a hero and martyr. There are universities named after him, and his statues can be found in many medical institutions throughout the world. His valiant fight against an obtuse scientific establishment unwilling to part with old ideas has been told and analyzed in numerous books and articles. Or so the story goes, but reality is more complex. Semmelweis was a difficult man who did not deal well with rejection, and was incensed that his ideas were not accepted.
He could be intolerant and dogmatic, insulting those that dared to criticize him, and he wrote angry letters to the press railing against his detractors in an egotistical and bellicose style. These character traits earned him a lot of ill will and many enemies. Although Semmelweis published editorial accounts of his work and wrote letters to other physicians, he did not publish a formal description of his results as scientists did in those days. In the meantime, scientific descriptions of his work were mostly divulged in second or third hand accounts by students and other supporters, leading to some confusion and misinterpretations regarding the finer points of his ideas. When he did publish his results in 1861, his book was not very well written, being long, repetitive, and difficult to read, not to mention that it also contained vicious attacks against some of his critics. Additionally, at the time that Semmelweis tried to convince the medical world of his findings, there were dozens of theories that purported to explain puerperal fever, and many were championed by important and powerful personalities. Semmelweis’s theory also had several problems. For example, his emphasis on “cadaveric particles” or “decaying organic matter” as the only cause of puerperal fever could not explain why some women contracted the disease without seemingly being exposed to any of these. Finally, Semmelweis essentially claimed that doctors, by not washing their hands properly, were killing their patients, and he called many of his critics "murderers". Although this was true, it was also a very inflammatory accusation that tended to elicit an emotional rather than a rational response.
There is no denying that Semmelweis made an important discovery that if widely accepted could have saved many lives, but it is painfully obvious nowadays that he torpedoed the acceptance of his ideas by his lack of tolerance and diplomacy, as well as his delay in publishing his findings and his poor writing style. His story highlights a key aspect of science that needs to be recognized by scientists that have made an important discovery that contradicts current scientific thinking. It is naïve not to take into account the complexities of human nature even in purportedly rational enterprises such as science. Evidence will not always speak for itself. To whom the evidence is presented, by whom it is presented, when it is presented, where it is presented, and how it is presented, are just as important as the nature of the evidence itself. It is not enough to be right. Engraving of three midwives attending to a pregnant woman by Jakob Rueff from the National Library of Medicine is in the public domain. Photograph by Alajos Stróbl of a marble statue of Ignaz Semmelweis in front of Szent Rókus Hospital in Budapest is from the Wellcome Collection and is used here under an Attribution 4.0 International (CC BY 4.0) license.

Literature consulted for this post:
1) Ignaz Phillip Semmelweis' studies of death in childbirth
2) Ignac Semmelweis (1818–1865) of Budapest and the prevention of puerperal fever
3) Ignaz Semmelweis: “The Savior of Mothers” On the 200th Anniversary of the Birth
4) Medicine in stamps-Ignaz Semmelweis and Puerperal Fever
5) Semmelweis and the aetiology of puerperal sepsis 160 years on: an historical review

Although I don’t normally deal with politics in my blog, I do deal with conspiracy theories and how scientists determine the truth. In that sense I have written posts about the 2020 election addressing both the election conspiracy and the methodology employed to investigate fraud in the election.
In this post I want to address the issue regarding the nature, significance, and validity of affidavits presented by the Trump campaign as evidence of fraud. One of the often repeated claims in the 2020 election was that Mr. Trump’s lawyers had hundreds of affidavits that indicated the existence of widespread voter fraud. An affidavit is a sworn written testimony by an individual that states that what the individual is telling is the truth. An affidavit is made under penalty of perjury, which means that if it is determined that the individual lied in their testimony they can be exposed to legal consequences. Many people take this to mean that Mr. Trump had strong evidence that voter fraud took place. After all, these witnesses were willing to sign these documents and face the consequences if their testimonies were shown to be false. However, this is not how an affidavit works. An affidavit merely certifies that a person considers that their assessment of reality is true. An affidavit does not rule out the possibility that the particular perception of reality that the person describes is wrong; it merely states that they consider it to be true. And you cannot prosecute people for believing honestly that something false is true. You have to demonstrate that there was intent to deceive, and in the majority of cases this is very difficult to prove. So the “sworn and signed under penalty of perjury” argument for the validity of affidavits is an exaggeration.

But, what about the claims that were made in these documents? It is not my intention to go over these claims in detail, as others have already done that. A proper affidavit should just stick to the facts and avoid opinions, hearsay, describing the views of others, and unfounded beliefs. Unfortunately, most of the claims behind the affidavits provided by Mr. Trump’s lawyers were assertions or beliefs that some things had happened, combined with conjectures about possible sinister motives behind these things. Other claims were merely things such as mean looks or rude remarks people had made at claimants, or suspicious things they had seen or heard. The majority of these claims were the product of hearsay, guesses, and speculations combined with ignorance by the claimants of the vote counting process or the voting dynamics. The claims in these affidavits did not hold up under scrutiny and they were dismissed by judges (several of them selected by Mr. Trump) as inadmissible or not credible. However, I want to point out a much broader issue regarding affidavits. Science has long known that people who are looking for something will tend to find it, even if there is nothing to be found. This is encapsulated in the dictum: “expectation influences perception.” Scientists also know that when an individual is exposed to a certain stimulus, this can influence the individual’s response to a subsequent stimulus. This is a process called “priming”. If, for example, you release the news that a mountain lion is loose in the city (even if this is not true), you deliver a primary stimulus that creates an expectation. If you then provide a telephone number to call in case somebody sights the animal, that phone will be ringing all day. When people thus primed are exposed to the normal stimuli that they encounter day to day as they go about their business, a significant number of them will reinterpret these stimuli to indicate that they have seen the mountain lion. 
Based on the foregoing, it is noteworthy that Mr. Trump repeatedly raised the possibility of voter fraud in the months leading up to the election and mentioned it regularly during the process of counting of the votes and afterwards. In view of his extensive social media presence at the time, this could have had a large priming effect on his supporters that raised expectations about voter fraud, and could have led them to interpret any glitches in the system, clerical errors, interactions with other poll workers and observers, and even routine election procedures that they were not familiar with, in the worst possible light. If you are primed to find fraud, especially if the person you voted for lost or is losing, you will find it. It is partly because of the above that in this case affidavits alone are not reliable evidence. There has to be additional supporting evidence of good quality that buttresses what the affidavits allege is true. In the case of the 2020 election court cases that were dismissed on their merits, the courts considered that no such evidence was provided to them. Image by Nick Youngson from Picpedia is used here under an Attribution-ShareAlike 3.0 Unported (CC BY-SA 3.0) license.
