Isolated Tribe in the Amazon

I have written that science can replace magical thinking, superstition, or erroneous ideas or beliefs by ever more refined and focused views of reality through observation and experiment. And this is essentially true. Science has done away with many beliefs and ideas that were not backed by facts. However, these changes rarely happen overnight, and in fact they are often met with stiff opposition. A significant number of people won’t modify their thinking based merely on piles of scientific evidence. If one of the purposes of performing science is to generate knowledge that will help people, then scientists have to take the beliefs and cultural norms of societies into account when pursuing the application of scientific knowledge.

To illustrate this, let me tell you a story. A long time ago a physician friend of mine was working in the Amazon jungle. He was tasked with helping the local natives with their medical needs. At the time, an outbreak of malaria was decimating some of the local tribes. My friend told me the story of how he had traveled by boat up a river for several days and then hiked through the jungle to reach a particularly remote tribe. He contacted the tribe’s healer and explained to him that he had some medicine that could help protect the tribe against malaria, but that it was not strong enough by itself, so he needed the help of the healer. He explained that if they combined his medicine with the healer’s powers, they would be able to beat the malaria scourge that was affecting the tribe. So my friend proceeded to treat all the members of the tribe and the healer proceeded to make his potions and perform his dances and rituals, and all the individuals in the tribe affected with malaria were cured.

On hearing this, I was astonished. Did my friend really think that the superstitious rituals and brews concocted by the tribe’s healer contributed to, or were needed at all for, curing the malaria?

Now, let me be clear on two things. First, I agree that indigenous peoples throughout the world have developed a rich and effective arsenal of products derived from plants and animals in their environment to treat different ailments and conditions. Second, I also agree that in diseases that are self-terminating (i.e. those from which most people recover) the right psychological frame of mind can go a long way towards making individuals recover faster from their ailment. Even if a treatment is not really effective in curing a person, merely believing it is can make a difference in terms of how fast a person recovers their health. However, when it comes to certain extreme diseases, both indigenous medicine and psychology have limitations, and they cannot compete with medicines designed through evidence-based science. When I questioned my friend about these matters, he agreed with me that the healer’s traditional methods were not effective against malaria, but then he stated that that was not the issue. He explained that in tribes like the one he visited, the healer is a central figure in the hierarchy of the tribe. In the eyes of his fellow tribe members, the healer is so important in the role of protecting the tribe from dangers both real and imagined, that a healer who is perceived as ineffectual can deeply affect the psychology of the tribe and impair the way the tribe faces difficult challenges. My friend said that if he had barged right in and cured everyone, he would have delegitimized the healer in the eyes of the tribe and done greater damage to the tribe than malaria. This is why he concocted the story about the need to combine both treatments. I was a bit shaken up by this. I understood that from a practical point of view this approach made sense, but I remained ambivalent. I asked him, what about truth, facts, evidence, and reality?
My friend replied that if enough people believe something no matter how preposterous, that belief for all practical purposes becomes a reality that you have to deal with if you are interested in helping out. If you go head on against these beliefs and disavow or belittle them, you will do more harm than good. I have thought about what my friend said over the years, and I believe it has some truth. People have deeply held beliefs that are often very important to them. From a scientific point of view, I may understand that some of these beliefs can be demonstrated to be false such as, for example, the belief in creationism, but I have to understand that the mere generation of more data and its repetition will not sway minds. And I think that this is a concept that should be applied (and is actually being applied) to the opposition against many of the initiatives that we need to implement today such as dealing with global warming or dealing with an increasing number of unvaccinated children. This is especially true in our current polarized environment, where scientists are portrayed by many with vested interests either as atheistic, liberal, socialist individuals who want the government to take over the lives of regular folk, or as individuals beholden to corporate interests who deliberately hide, falsify, or mischaracterize data. The success of the strategy I outlined above will depend on the approach. Very conservative and religious people will be suspicious of scientists warning them of how, unless we change our behavior, we will harm the planet. However, they may be more receptive if the focus is on the concept that humanity is the steward of creation; that we should take care of what God has created. This approach will be even more effective if it is implemented by individuals who share their own beliefs. A similar approach is also needed with people who are hesitant to vaccinate their children because they believe that vaccines cause autism. 
Many of these people have been swayed by stories of human suffering interpreted within the context of false or simplistic alarmist explanations. Data and facts are important in combating these false or misleading narratives, but the human side of the issue has to be addressed if scientists wish to change some minds. Scientists should acknowledge the parents’ fears and stress that the common goal of everyone is to protect children, and explain that’s why scientists vaccinate their own children. They should talk about the millions of people alive today because of vaccines, and about how the world was when smallpox, diphtheria, pertussis, tetanus, polio, measles, mumps, rubella, and other diseases were prevalent in our societies. Again, these arguments will be more convincing if delivered by former vaccine opponents.

The human mind is very complex. Different people perceive the same reality in different ways determined by genes, experience, and culture. Some of these perceptions will not conform to the actual reality that’s out there, but as explained above, this in itself constitutes a reality that must be taken into account if we truly want science to help humanity. Whether it is helping a tribe in the Amazon or getting people to go green or to vaccinate their children, science cannot operate in a vacuum.

Photo by Agência de Notícias do Acre used here under a Creative Commons Attribution 2.0 Generic license.


George Washington's Dentures

I recently visited the National Museum of Dentistry in Baltimore. One of their valued exhibits is the dentures of the first president of the United States, George Washington. These dentures were made out of ivory (not wood as in the popular myth) and were quite cumbersome to wear. These dentures also reflected a sad reality. In Washington’s time, most people who lived to old age had lost a significant number of their teeth. This tooth loss process, with all the accompanying misery and pain, and the risks of infection and death associated with tooth extraction in those days before antibiotics and anesthesia, was made much worse by the introduction of refined sugar into Europe and North America. But I’m getting ahead of myself. What did the best minds of antiquity consider to be responsible for tooth decay?

The two objects below are also at the National Museum of Dentistry. The objects are a replica of a French sculpture from the 1700s. They depict two halves of a tooth. The left half fits into the right half to reproduce the full tooth. Inside the right half there is a ghastly rendition of the fires of hell with human beings being cast into the flames. This makes a reference to the sufferings experienced by people with toothaches. The left half depicts a scene where a man is devoured by a tooth worm.

The Tooth Worm

Now, what exactly is a "tooth worm"? The tooth worm was an explanation for why people experienced toothaches! It was believed that this worm appeared inside the tooth or made its way into the tooth and then caused the pain. References to the tooth worm go back thousands of years, and treatments for dental pain included strategies ranging from the chemical to the magical to coax the tooth worm out or make it go dormant.
Many claimed to have found tooth worms during the process of tooth extraction, but such occurrences either had to do with regular worms that lived as parasites in the gums of individuals, or with normal structures such as tooth nerves that were mistaken for worms. The tooth worm explanation, although widespread, never led to any effective treatments or strategies for preventing toothaches or tooth decay. As science came of age, the thinking and procedures of the scientific method began to be applied to figuring out what was going on with people’s teeth. Below I present an abbreviated timeline taken from a great article by Ruby and coworkers published in the International Journal of Dentistry in 2010.

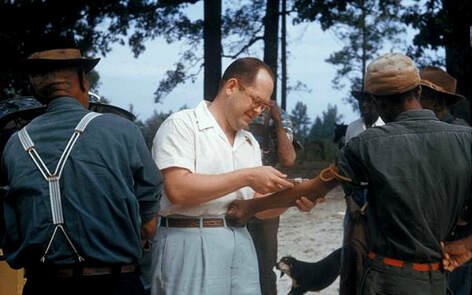

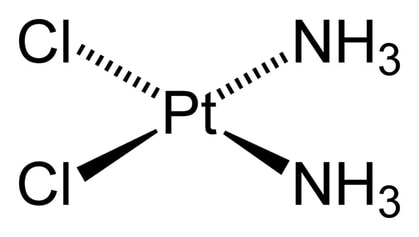

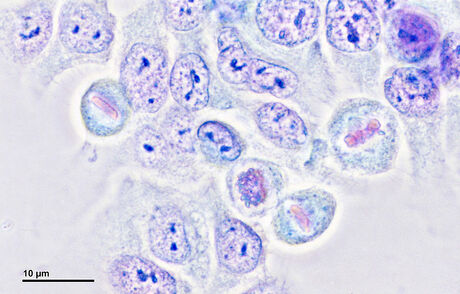

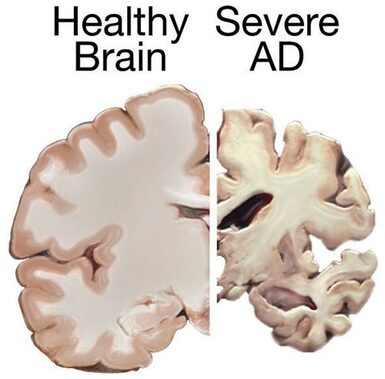

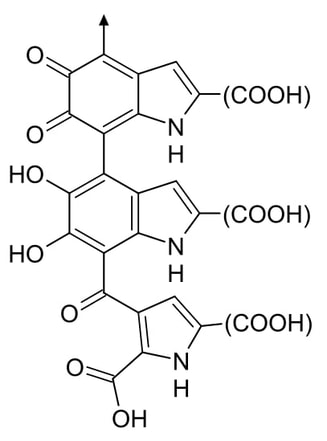

In the year 1700 the Dutch scientist Antonie van Leeuwenhoek (a pioneer of microscopy) was sent some alleged tooth worms for analysis. He found that these worms were no different from those found in cheese, and he also isolated these worms from the mouths of people who ate said cheese. Leeuwenhoek also took samples from decayed teeth and observed they had tiny living things that he called “animalcules” (little animals), and which we now know to be bacteria. Pierre Fauchard (considered the father of modern dentistry) carried out extensive studies of diseased teeth in 1728, finding no worms, and suggested that sugar consumption enhanced tooth decay. It became clear to many scientists that there was no such thing as a tooth worm. But then, what caused tooth decay? A series of hypotheses (some nearly as fanciful as the tooth worm) were proposed, from “temperature changes”, “imbalances”, and “internal factors” to “heredity”. Several scientists in the first half of the 19th century observed that tooth decay started from the outside and proposed that it was caused by external chemical agents. Later in 1876, Pasteur demonstrated that fermentation of sugars was a chemical process caused by microorganisms. With the realization that many bacteria ferment sugars to acids, scientists like Greene Vardiman Black and Willoughby D. Miller in the late 19th century assembled the final theory, which posited that bacteria ferment sugars and produce acid that erodes the dental surface, producing cavities. Further work confirmed this theory, expanded it to refer to specific dental sites (plaque), and adapted it to the concept that tooth decay is an infectious and transmissible disease involving specific strains of bacteria. The understanding of the true nature of tooth decay, along with other advances, allowed the development of both preventive and restorative practices that revolutionized dental care worldwide.
Today in countries like the United States, any person who follows the recommended guidelines of oral hygiene and has regular checkups performed by a dentist will experience but a tiny fraction of the dental problems suffered by our ancestors a few hundred years ago. This is the way science works. Magical thinking, superstitions, or erroneous ideas like the concept of a tooth worm that do not contribute to solving our problems fall by the wayside, and are replaced by ever more refined and focused views based on grasping aspects of reality through observation and experiment.

The photographs are by the author and may be used with permission.

Nazi Doctors trial in Nuremberg 1946–1947

Science is the best method we have to find the truth about the behavior of matter and energy in the world around us. As opposed to other alternatives that seek to discover the truth about the universe and generate applications, science works, and that is a fact. This, however, creates a problem. Despite its achievements, it must always be remembered that one of the greatest limitations of science is that concepts like “good or bad”, “moral or immoral”, or “ethical or unethical” are alien to it because they depend upon value systems. Not only is science unable to answer some of the most pressing questions we have about the meaning of our existence and how to live our lives, but the scientific method does not have any inbuilt requirement to follow ethical or moral procedures when answering questions. Science is merely a tool, no different from an ax, and an ax can be used to build or it can be used to kill. And this leads us to scientists. Because scientists are the wielders of a tool (science) that actually works, they are sometimes sought out and recruited by unscrupulous people, organizations, corporations, and governments to carry out research that may be questionable in nature or downright unethical or immoral.
This is because these entities know that given enough time and resources, scientists will produce results. And, despite their smarts and their academic degrees, scientists are as human as any person in the street. Most scientists are good, moral, and ethical people, or at least they try to be, but a few are not. It is important to understand this because performing research following the scientific method may make you a more rational and thinking person, but it does not necessarily make you a good, moral, or ethical person. I have previously detailed how many scientists seeking to advance their careers or simply gain a measure of stability, game the system in ways that are mostly benign, but some scientists engage in practices that are outright fraudulent such as forging data. However, nowhere are the consequences of the ethical lapses of scientists more serious than in fields of science involving human experimentation. Horrific human experimentation was carried out by scientists of the Nazi regime in Germany during World War II. From exposure to disease and chemical gases to forced sterilization and limb transplantations, these experiments were detailed during the Nuremberg Trials, and they have become the epitome of evil. However, these experiments pale in comparison with the atrocities carried out by Japan’s Imperial Army and specifically by an infamous branch called Unit 731, mostly on Chinese nationals during the war. Tens of thousands of civilians were exposed to chemical and infectious agents and many subjected to other tests and sometimes dissected alive in some of the most gruesome experiments ever carried out in the annals of infamy. Examples of other nations that engaged in ghastly human experimentation include the Soviet Union, which carried out experiments where they applied several poisons to the inmates in the Russian Gulag prisons, and North Korea where human experimentation in concentration camps is still ongoing according to defectors.  

Participants in the Tuskegee Syphilis Study

What led scientists to participate in the heinous experiments outlined above? It can be argued that these countries were or are dictatorships, and scientists were either brainwashed or coerced into these activities. However, this ignores that despicable cases of human experimentation have also occurred in or been sponsored by democracies such as the United States. A case in point is the infamous Tuskegee study, which began in 1932 and lasted for 40 years. In this study, carried out by the U.S. Public Health Service in cooperation with the Tuskegee Institute (now Tuskegee University), nearly 400 black men in Alabama who had syphilis were told they were being treated for “bad blood” and were never informed of the true purpose of the study, which was to observe the consequences of untreated syphilis. Even when penicillin became available to treat syphilis in the 1940s, the participants in the study were still not treated. The men were followed for many years and the results were documented and published. As late as 1969, when the ethics of the study were being questioned, the Center for Disease Control, with support from local chapters of the American Medical Association and the National Medical Association, still argued for continuing the study. The study finally ended in 1972, but by then many of the men had died from syphilis or complications associated with it, many of their wives had been infected, and more than a dozen children had been born with congenital syphilis. While the Tuskegee study can in part be blamed on racism, that explanation falls short when considering the Cold War human radiation experiments conducted by the US government.
These experiments ranged from exposing individuals to radioactive substances without providing appropriate information or even obtaining consent, to releasing radioactive gases into the environment to study their dispersal over areas that had significant human populations. Another series of unethical experiments was conducted by the CIA under code names like “MKUltra”, “Artichoke”, or “Midnight Climax”, beginning in the 1950s and continuing into the early 1970s. In these experiments, conducted in venues ranging from universities to prisons, American citizens were exposed to mind-altering drugs like LSD without their consent to study how individuals could be controlled. These experiments demonstrate that when confronted with a threat (in this case the development of nuclear weapons or the potential for mind control capabilities by the Soviet Union), the US government tended to relax or ignore ethical standards, and many scientists were willing to go along. However, in some situations the driver behind unethical experiments has just been the desire to answer meaningful scientific questions. Such was the case of the famous “Monster Study” performed in 1939 as part of the Stuttering Research Program at the University of Iowa. In this study, scientists set out to test the theory that stuttering was an acquired behavior as opposed to being of genetic origin. For this they performed an experiment with children at an Iowa orphanage without telling the children that they were going to be involved in a study, and misled the caretakers of the orphanage about the purpose of the study. The researchers divided the children into groups that either received positive reinforcement, which involved commending them for speaking well, or negative reinforcement, which involved criticizing them for any imperfections in their speech. Some of the children who received the negative reinforcement developed speech problems that they retained for the rest of their lives.
A more contemporary example of an ethical lapse in experimentation with humans is the creation of babies whose DNA was genetically modified employing the CRISPR technique by the Chinese scientist He Jiankui in 2018. In this case the motivation of the scientist seems to have been to be the first to have done it. The children created with the genetic modifications seem to be healthy at the moment, but their long-term health prospects are unknown. This experiment was universally condemned by the scientific community of both China and the world.

The above examples, and many others of scientists committing or becoming involved in unethical or immoral acts, justify the need for regulations, especially in fields that involve experimentation with human subjects. In the United States and other countries, legislation is now in place that regulates scientific experimentation, and on top of this there are citizen “watchdog” organizations that independently monitor various aspects of the scientific enterprise. All this is necessary because of the limitations of science.

The photograph of the Nazi doctors' trial in Nuremberg taken by US army photographers is currently in the Holocaust Memorial Museum and is in the public domain. The photograph of participants in the Tuskegee Syphilis Study is from the National Archives and is in the public domain.

Cisplatin

A long time ago an old professor of mine told me a story. He visited a laboratory which had recently started a line of research involving the study of the pancreas. The lab had been doing this for one year and had generated data that was included in a couple of articles they had published. This was one of those labs where the principal investigator was always travelling, teaching classes, or writing grants, so he delegated a lot of the supervisory work to staff scientists.
Unfortunately, one of the staff scientists turned out to have lax standards and did not closely supervise the graduate students and technicians under him. My professor was touring the lab, and he walked by a technician and a student busy at work who told him they were dissecting a mouse’s pancreas for later analysis. However, when he looked closely, my professor was a bit confused. “What is it again that you are doing?” he asked. “We are removing the pancreas from this mouse”, the technician replied. My professor took a deep breath and awkwardly proceeded to inform them that the organ they had just removed was the spleen, not the pancreas. The lab had been working on the wrong organ!

Many scientists tend to focus their attention on getting the big things right, but it is often the overlooked little things that can derail a project. An under-researched or poorly thought-out approach to an experiment, a lack of focus on the procedures, an improperly calibrated piece of equipment, a sloppy experimental methodology, or a poorly supervised lab hand is all it takes to introduce error into the science. And the problem is that the effort to prevent these mistakes from happening is often boring, repetitive, detail-intensive, unglamorous work that some scientists would rather have others do while they instead devote their time to reading, discussing, and thinking about exciting and important ideas.

Most mistakes, like the one I described at the beginning of this post, involve a few labs and they typically remain within the realm of the anecdote. However, other mistakes involve a large enough number of labs that they merit publication in the scientific literature. For example, in the cancer research field there is a drug called cisplatin that contains the element platinum and is used in the treatment of many cancers. Cisplatin and several similar compounds are also extensively researched in the lab.
One of the issues with cisplatin and related compounds is their poor solubility in water. Because of this, many labs have employed other solvents to be able to make larger amounts of the drug go into solution. Among these solvents is one called dimethylsulfoxide (DMSO), which is often used to dissolve substances that are otherwise hard to get into solution. The problem with using DMSO to solubilize cisplatin, as was pointed out in an article published in 2014, is that DMSO reacts with the platinum in the drug and inactivates it! The authors of the article found that anywhere from 11% to 34% of published studies in selected cancer journals used DMSO to solubilize cisplatin, compromising the interpretation of the results. And the real number was bound to be higher, as 26-50% of the studies did not report the solvent employed to solubilize cisplatin. The effect of DMSO on cisplatin had already been reported in the scientific literature 20 years earlier, but it seems that none of these labs were aware of the problem.

Henrietta Lacks

The above mistake and others like it are mostly occurrences that are not publicized beyond the complex technical field in which they originated. However, some mistakes involve so many labs, and are of such catastrophic proportions, that they actually find their way beyond the scientific literature and into the popular realm. Such was the case of the HeLa cell contamination. The first human cell line to be grown continuously in culture was the famous HeLa cell line. This cervical cancer cell line is named after the woman from whom the cells were isolated, Henrietta Lacks, who died in 1951. As the first immortal human cell line, HeLa cells were sent all over the world and used for many experiments in various fields. Among the notable achievements using HeLa cells was the development of the polio vaccine. Other cell lines were also eventually obtained, giving scientists even more tools to study various facets of both pathological and normal cell functions of different organs.
The story of Henrietta Lacks and her cells is told in the book The Immortal Life of Henrietta Lacks by Rebecca Skloot.

HeLa Cells

However, as more and more cell culture work was performed, a few scientists, including the notable researcher Dr. Walter Nelson-Rees, discovered that the cell lines that many researchers were working with were not what they thought they were. The HeLa cells had taken them over! It seems that sloppy cell culture practices, such as working with several cell lines at the same time to speed up the pace of lab work, had led to contamination. As a result of this mistake, hundreds of published articles became worthless, tens of millions of research dollars were wasted, and several academic careers were derailed. Even though the techniques to authenticate cell lines and cell culture methods have improved significantly, contamination of cell lines by other cells (not just HeLa) remains a significant problem today. The saga of the HeLa cell contamination is described in the book A Conspiracy of Cells by Michael Gold.

When the general public reads about scientific discoveries, they read about the end product of science. What is not conveyed is the long hours spent making sure the equipment, the procedures, and the people work the way they should. Remember, in science the devil is in the technical details!

The photo of Henrietta Lacks from the Oregon State University Flickr webpage is used here under an Attribution-ShareAlike 2.0 Generic (CC BY-SA 2.0) license. The image of HeLa cells by Josef Reischig is used under an Attribution-ShareAlike 3.0 Unported (CC BY-SA 3.0) license. The drawing of the formula for cisplatin has been placed in the public domain by Benjah-bmm27.
In this blog I have pointed out that there is a progression in emerging fields of scientific inquiry where competing theories are evaluated, those that do not fit the evidence fall out of favor, and scientists coalesce around a unifying theory that better explains the phenomena they are studying. However, even as a new theory that better fits the available data is accepted in the field, there are individuals who contest the newfound wisdom. Instead of accepting the prevailing thinking, these individuals buck the trend, think outside the box, and propose new ways of interpreting the data. I have referred generically to individuals belonging to this group of scientists that “swim against the current” as “The Unreasonable Men”, after George Bernard Shaw’s famous quote, and I have stated that science must be defended from them. The reason is that science is a very conservative enterprise that gives preeminence to what is established. Science can’t move forward efficiently if time and resources are constantly diluted pursuing a multiplicity of seemingly farfetched ideas. However, this is not to say that the unreasonable man should not be heard. There are exceptional individuals out there who have revolutionary ideas that can greatly benefit science, but there is a time for them to be heard. One such time is when the current theory fails to live up to expectations. I am writing this post because such a time may have come to the field of science that studies Alzheimer’s disease (AD). Alzheimer’s disease is a devastating dementia that currently afflicts 6 million Americans. The disease mostly afflicts older people, but as life expectancy keeps increasing, the number of people afflicted with AD is projected to rise to 14 million by 2050. The disease is characterized by the accumulation of certain structures in the brain. Chief among these structures are the amyloid plaques, which are made up of a protein called “beta-amyloid”. 
The current theory of AD pathology holds that it is primarily the accumulation of these plaques, or more specifically their precursors, which is responsible for the pathology. Therefore, it follows that a decrease in the number of plaques should be able to alleviate or slow down the disease. This has been the paradigm that pharmaceutical companies have pursued for the past few decades in their quest to treat AD. Unfortunately, this approach hasn’t worked. For the past 15 years or so, every single therapy aimed at reducing the amount of beta-amyloid in the brain has led to largely negative results. In fact, some patients whose brains had been cleared of the amyloid deposits nevertheless went on to die from the disease. Several arguments have been put forward to explain these failures. One of them is the heterogeneity in the patient population. Individuals who have AD often have other ailments that may mask positive effects of a drug. According to this argument, performing a trial with patients that have been carefully selected stands a greater chance of yielding positive results. Another argument is the notion that many past drug failures have occurred because the patient population on which they were tested was made up of individuals with advanced disease. According to this argument, drugs will work better with early-stage AD patients who have not yet accumulated a lot of damage to their brains. Even though many researchers still have hopes that modifications to clinical trials like those suggested above will have the desired effect as predicted by the amyloid theory, an increasing number of investigators are considering the possibility that this theory is more incomplete than they had anticipated and are willing to listen to new ideas and open their minds to the unreasonable man.

One example of these men is Robert Moir. For several years he has been promoting a very interesting but unorthodox theory of AD and getting a lot of flak for it.
He dubs his hypothesis “The antimicrobial protection hypothesis of Alzheimer’s disease”. According to Dr. Moir, the infection of the brain by a pathogen or other pathological events triggers a dysregulated, prolonged, and sustained inflammatory response that is the main damage-causing mechanism in AD. In this hypothesis, the production and accumulation of the amyloid protein by the brain is actually a defense mechanism! Dr. Moir agrees that sustained activation of the defense response will lead to excessive accumulation of the amyloid protein and that this eventually will also have detrimental effects. However, even though reduction in amyloid protein levels may be beneficial, accumulation of the amyloid protein is but one of several pathological mechanisms. Moir stresses that the main pathological mechanism that has to be addressed by AD therapies is a sustained immune response, which over time causes brain inflammation and damage. He considers that accumulation of the amyloid protein is a downstream event, and it is known that the brain of people with AD exhibits signs of damage years before any amyloid accumulation can be detected. But much in the same way that Dr. Moir has been promoting his unconventional theory, there are many other theories proposed by others. Oxidative stress, bioenergetic defects, cerebrovascular dysfunction, insulin resistance, non-pathogen mediated inflammation, toxic substances, and even poor nutrition have been proposed as causative factors of AD. This is the big challenge that scientists face when opening their minds to the arguments of the unreasonable man: there is normally not one but many of them! So who is right? Which is the correct theory? And why should just one theory be right? Maybe there is a combination of factors that in different dosages produce not one disease but a mosaic of different flavors of the disease. 
And maybe the amyloid theory is not totally wrong, but merely incomplete, and it needs to be expanded and refocused. Or maybe the beta-amyloid theory is indeed right and all that is required for success is to tweak the trial design and the patient population. Maybe, maybe, maybe… When a scientific field is beginning, or when it looks like a major theory in a given field is in need of reevaluation, there always is confusion and uncertainty. Scientists in the end will pick the explanation(s) that best fit the data and take it from there. They did that when most scientists accepted the amyloid theory and they will do it again if this theory is found wanting. The new theory that replaces the amyloid theory will not only have to explain what said theory explained, but it will also have to explain why the old theory failed and what new approach must be followed to successfully treat the disease. In the meantime, Dr. Moir’s theory, along with a few others, is the center of focus of new research evaluating alternative theories to explain what causes AD. The amyloid theory or aspects of it may still be salvageable, but in the field of AD it certainly looks like the time for the unreasonable man has come.

Note: after I posted this, I became aware of an article published in the journal Science Advances that proposes a link between Alzheimer's Disease and gingivitis (an inflammation of the gums). The unreasonable men are restless out there! The image is a screen capture from a presentation by Robert Moir on the Cure Alzheimer’s Fund YouTube channel, and is used here under the legal doctrine of Fair Use. The brain image from the NIH MedlinePlus publication is in the public domain.

Old Slave Mart Museum

I visited Charleston and stopped by the Old Slave Mart Museum. 
The museum occupies the very same building where black people in the 1850s were sold like animals, often tearing families apart when different family members were sold to different buyers. It is believed to be the last such structure of its kind still standing in South Carolina. The inside of the museum chronicles in detail the evils of slavery and documents how the old city of Charleston was built by slave labor.

Area Occupied by the Morgue

The role of slaves in building the city was further expounded upon in a tour of the area behind the Slave Mart which used to have a morgue, a kitchen, and a barracoon (these three structures, demolished long ago, were part of a scheme by which slaves to be sold were fed and made healthy before auction to the highest bidder). Standing in the area that used to be occupied by the morgue, the tour guide explained that slaves made the bricks used in the original buildings of the city. To make the bricks, the slaves would insert clay in a mold and then allow it to dry in the sun. The clay was then removed from the mold in a process where slaves used their hands and sometimes left imprints of their fingers in the clay of bricks that were removed too early.

Eumelanin

Being able to insert my fingers in those indentations was a powerful experience. The individual who was forced to make these bricks is dead and long-forgotten, but the marks his fingers made on this brick so many years ago are evidence that he existed. This individual lived a life of servitude under a cruel system that considered him property. People like him made up the economic backbone of the Southern United States, building its cities and towns and growing its crops. It would take a ghastly civil war and more than 500,000 casualties to break this backbone and start the country on the path that would guarantee freedom to blacks in the United States. 
Racism is a many-layered phenomenon that has multiple proximal causes, but at its most fundamental level racism is probably a by-product of xenophobia, the fear of strangers or those who are different. Xenophobia from a biological point of view grants survival advantages to animals including humans. Keeping close to those we know (flock, pack, family, tribe etc.) is safer than approaching those we don’t. However, human beings can all too easily make the leap from “different” to “inferior” and from there to “not worthy of fundamental rights or respect”. But what differences can make someone “inferior” in the eyes of another? There has been a lot of controversy regarding things like intelligence testing or cranial capacity and how the different races fare against each other when evaluated by these metrics. I am not going to discuss these here because the vast majority of racist people do not make the primary decision to discriminate based on these metrics. Even though there are several differences between white and black individuals, the most obvious trait based on which a white person makes the decision to discriminate against a black person is that which makes them most different: the color of their skin. What is responsible for skin color? The color of the skin is due to pigments called eumelanin and pheomelanin that are produced in skin cells called melanocytes and packaged in organelles called melanosomes. The number and size of these melanosomes and the ratio of eumelanin to pheomelanin determine skin color. Eumelanin is the darker pigment and protects the skin from the harmful ultraviolet rays of the sun that can produce cancer. Scientists believe that eumelanin evolved to protect the skin as an adaptation to life in the tropics when the ancestors of human beings lost their bodily hair. At the same time, however, sunlight is required for the human body to manufacture vitamin D, which is necessary for life. 
As human beings with dark skin migrated from the tropics to northern latitudes where sunlight is not as strong and the body has to be covered to preserve heat, black skin became a hindrance to vitamin D production. This in turn favored the advent of human beings whose skin produced less melanin and was lighter in color. Science cannot make value judgements because that is simply not its nature. Science cannot tell us that discrimination or slavery (or anything else for that matter) is “wrong”. However, science does have tools that allow for examination of arguments within logical frameworks. As a scientist, when I hear someone expound racist ideas arguing that people with black skin are somehow inferior to people with white skin, what I hear is that just because the concentration of eumelanin in your skin is higher, that makes you somehow inferior to others who have lower levels of this molecule. To me this does not make sense. Why should having higher levels of eumelanin in an organ like the skin make you inferior to others? The biochemical and physical properties of eumelanin have been extensively studied and, besides its relationship to cancer and vitamin D, there is nothing in this molecule whatsoever that is in any way connected with a possible physical or mental handicap (if that is what is meant by the word “inferior”). The whole premise is absurd.

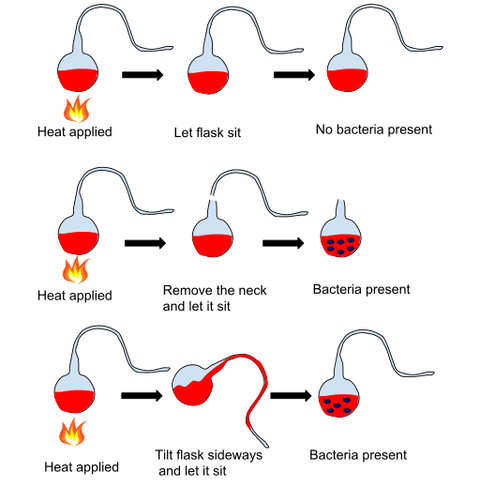

Xenophobia may form part of our inherent biological programming and may make us prone to discriminate against others, but the impulses that arise from this ancient programming can be channeled in positive ways and modified by education to produce adults who judge people by their character and not the color of their skin. The image of eumelanin is in the public domain. The pictures are by the author and may be used with permission.

There is an old science joke where a scientist trains a spider to jump when a bell is rung. The scientist then removes a leg from the spider under anesthesia and makes sure the spider heals. The scientist proceeds to ring the bell again. Even with one less leg, the spider manages to jump in response to the sound. The scientist repeats this procedure removing a leg each time and ringing the bell, and the spider manages to “jump” in response (at least as best as it can). This goes on until the spider is legless. After repeatedly ringing the bell and getting no response, the scientist concludes that the spider without legs becomes deaf! What is ironic about this silly joke is that scientists have recently discovered that indeed the spider's ability to detect sound is based on hairs present on its legs. So you see, the scientist in the joke arrived at the right conclusion (legless spiders become deaf) although for the wrong reasons! The above got me thinking about whether there are any examples of real scientists who got it right but for the wrong reasons. As it turns out there are several examples, and one of the most famous of these involves the French chemist and microbiologist Louis Pasteur and the idea of spontaneous generation. In the 19th century, the notion of spontaneous generation came under attack. This ancient notion posited that life continuously arises from non-life. At the time, the fact that nutrient broths exposed to the air would develop microorganisms was considered evidence for spontaneous generation. 
However, although a consensus had begun developing that life could only arise from preexisting life, and that the microorganisms that swarmed in nutrient broths represented nothing but airborne contamination, many scientists still defended the idea of spontaneous generation. Into this scene entered Pasteur, and he performed his famous experiments where he boiled sugared yeast-water in a flask that remained exposed to the environment through a very long neck (swan-necked flasks). Pasteur found that the nutrient mix inside the flasks remained uncontaminated, presumably because any airborne organisms stuck to the neck of the flasks, but as soon as he broke the neck of the flasks or tilted them, bacteria and mold started growing. He repeated these experiments with sterile liquids that did not have to be boiled, like blood or urine obtained directly from the bladder, and attained the same result. To great acclaim by the scientific community he declared that he had demonstrated that only germs in the air can produce the growth observed in these nutrient mixes, therefore life can only arise from life. However, a few scientists challenged Pasteur. These scientists, using different nutrient mixes like hay infusions or potash solutions derived from urine, found that microorganisms arose in nutrient broths even after they had been boiled and isolated from the environment in a manner similar to Pasteur’s procedure. They claimed their results proved that, at least in these different nutrient infusions, life arose by spontaneous generation and challenged Pasteur to repeat their experiments publicly. What did Pasteur do? He ignored their results, or dismissed them claiming they were due to sloppy methodology, and refused to repeat their experiments. Pasteur prevailed thanks to his connections, his fame, and for being against spontaneous generation at the right time. As far as he and his supporters were concerned the matter had been settled. So who was right? 
Even though Pasteur acted very unscientifically in addressing his critics, he was indeed right, but for the wrong reasons. Spontaneous generation does not occur, but Pasteur was wrong to ignore or dismiss his critics’ results, because these results were true. It would be more than a decade after Pasteur performed his experiments before it was understood that there are bacteria that produce spores which can survive heating at very high temperatures. It is likely that Pasteur’s infusions of sugared yeast-water did not contain these bacteria, but that the infusions of hay and potash employed by his critics had these spore-forming bacteria. Therefore, even after boiling these infusions at high temperature, Pasteur’s critics found these nutrient mixes became contaminated when the spores became active after the temperature had returned to normal. The critics were wrong in claiming their results supported spontaneous generation, but at the time the complete truth was not known, and there was enough ignorance to go around.

Pasteur

Ironically, if Pasteur had behaved like a true scientist and repeated his critics’ experiments, he would have had to face conflicting evidence that could have led him to admit that spontaneous generation was possible in some cases. This could have muddied up the field and hindered progress, but by ignoring valid data, he avoided an incorrect interpretation, and arrived at the right conclusion. Such is the complexity of the interaction between reality and the human mind in the context of scientific discovery. Pasteur moved on to greater accomplishments (and more controversy, but that’s another story) where he was proven right many times. 
Today he is remembered for the process to prevent bacterial growth in substances like wine and milk (pasteurization), his many contributions to the prevention of disease which saved countless lives, and the founding of the Pasteur Institute which over the years has had a huge impact on health science worldwide with 10 of its scientists being awarded Nobel Prizes. Nevertheless, in the case of spontaneous generation, he was right but for the wrong reasons! My reference for Pasteur’s story is John Waller’s excellent book: Fabulous Science. Image of Pasteur’s experiment by Kgerow16 is used here under an Attribution-ShareAlike 4.0 International (CC BY-SA 4.0) license. The image of Pasteur by Paul Nadar is in the public domain.

Most scientists have it easy. By this I don’t mean that science is easy, but rather that scientists experiment on animals or OTHER people. Sure, these experiments are conducted following ethical guidelines to minimize pain to laboratory animals or to ensure the safety of patients, but the point of my argument is that it’s easy to administer a treatment that makes the entity receiving said treatment sick, or that carries other risks, when that entity is not yourself. However, throughout the history of science some scientists have broken through the wall of security that separated them from their test subjects and became their own lab rats, their own patients. These scientists who experimented on themselves conducted what I call “heroic science”. Let’s look at some of these characters.

Barry Marshall

In a previous post, I mentioned the case of the Australian physician Dr. Barry Marshall who wanted to convince skeptical fellow scientists that ulcers were not caused by excessive stomach acid secretion due to stress, but rather by a bacterium called Helicobacter pylori. 
Unable to develop an animal model or to obtain funds to perform a human study, he experimented on himself by drinking a broth containing Helicobacter pylori isolated from a patient who had developed severe gastritis. He developed the same symptoms as the patient and was able to cure himself with antibiotics. As a result of this and other studies, Dr. Marshall was awarded a Nobel Prize in 2005. Another of these heroic individuals was Werner Forssmann, who as a resident in cardiology in a German hospital wanted to try a procedure to insert a catheter through a vein all the way to the heart. Forssmann was convinced that if this could be done, it would allow doctors to diagnose and treat heart ailments. However, he could not obtain permission from his superiors to perform the experiment on a patient, so he tried it on himself. He made an incision in his arm, inserted a tube, and guiding himself with X-ray photography, he pushed the tube all the way to his heart. At the time there was a lot of opposition to Forssmann and his unorthodox methods, and although he persevered for some time, he became a pariah in the cardiology field and was forced to switch disciplines, becoming a urologist. However, eventually other scientists refined the technique of catheterization described in his work, and developed it into valuable medical procedures that have saved many lives. Forssmann had the last laugh when he was awarded the Nobel Prize in 1956. Most heroic science studies didn’t lead to a Nobel Prize, but some resulted in useful information. For example, John Stapp was an air force officer who experimented on himself to test the limits of human endurance in acceleration and deceleration experiments. He would be strapped to a rocket that would rapidly accelerate to speeds of hundreds of miles per hour and then stop within seconds. 
As a result of these brutal experiments, Stapp suffered concussions and broke several bones, but he survived, and the knowledge generated by his research eventually resulted in technologies and guidelines that today protect both car drivers and airplane pilots. Despite its appearance of recklessness, heroic science is seldom performed in a vacuum, but rather it is performed by individuals who believe that, based on other evidence, nothing will happen to them.

Joseph Goldberger

Such was the case of Dr. Joseph Goldberger. In the early 1900s, the disease Pellagra afflicted tens of thousands of people in the United States. Dr. Joseph Goldberger performed experiments that indicated that Pellagra was a disease that arose due to a dietary deficiency rather than a germ. Faced with recalcitrant opposition to his ideas, Goldberger and his assistants injected themselves with blood from people afflicted with Pellagra and applied secretions from the patients’ noses and throats to their own. They also held “filth parties” where they swallowed capsules containing scabs obtained from the rashes that patients with Pellagra developed. None of them developed Pellagra. This along with other evidence demonstrated that Pellagra was not a disease carried by germs. Another case was that of the American surgeon Nicholas Senn, who in 1901 implanted under his skin a piece of cancerous tissue that he had just removed from a patient. As he expected, he never developed cancer. Senn did this to demonstrate that cancer is not produced by a microbe, as it is not transmissible from one human to another, although at the time there were many pieces of evidence that taken together indicated that this was the case. Of course, the mere fact that you perform heroic science doesn’t mean that you will reach the right conclusions.

Max von Pettenkofer

Back in 1892 the German chemist Dr. 
Max von Pettenkofer disputed the theory that germs caused disease, and specifically that a bacterium called Vibrio cholerae caused the disease cholera. He requested a sample of cholera bacteria from one of the most prominent proponents of the theory, Dr. Robert Koch (who won the Nobel Prize in 1905), and when he got the sample he proceeded to ingest it! Pettenkofer fell slightly sick for a while, but did not develop cholera. He claimed that this proved his point, but the vast majority of the evidence generated by others indicated he was wrong, and his claim was never accepted. A rather remarkable example of misguided heroic science is the work of Doctor Stubbins Ffirth. This individual studied the incidence of Yellow Fever cases in the United States back in the 1700s and noticed that Yellow Fever was much more prevalent during the summer months. Thus he developed the notion that Yellow fever was due to the heat stress of the summer months, and that therefore it was not contagious. To prove this he embarked on a series of gross experiments where he exposed himself to the bodily fluids of Yellow Fever patients. He drank their vomit, he poured it in his eyes, he rubbed it into cuts he made in his arms, he breathed the fumes from the vomit, and he also smeared his body with urine, saliva, and blood of Yellow Fever patients. Since he never contracted the malady, he concluded that Yellow Fever was not contagious. However, not only did Ffirth employ bodily fluids from late-stage Yellow Fever patients whose disease we now know not to be contagious, but he also missed the fact that Yellow Fever is transmitted by mosquitoes (see below), which is the reason why it’s more prevalent during the summer! And finally, some of the scientists who engaged in heroic science suffered or died as a result of their experiments, but their sacrifice saved lives or resulted in advancements in the understanding of terrible diseases as the following two cases show.  
Jesse Lazear

In 1900 the army surgeon Walter Reed and his team in Cuba put to test the theory that Yellow Fever was spread by mosquitoes, which at the time was not taken seriously by many scientists. They had mosquitoes feed on patients with Yellow Fever and then allowed the mosquitoes to bite several volunteers, among whom were two members of Reed’s team, the American physicians James Carroll and Jesse Lazear. Several of the people bitten by these mosquitoes developed Yellow Fever including Carroll and Lazear. Lazear died, but Carroll recovered, although he experienced ill health for the rest of his life. After this demonstration that Yellow Fever was transmitted by mosquitoes, a program of mosquito eradication was implemented that succeeded in dramatically reducing the cases of this disease.

In the Andes in South America there is a disease called Oroya Fever that periodically decimated the population in some localities. In the 1800s many physicians suspected that this disease was connected to another condition that led to the production of skin warts (Peruvian Warts), but no one had ever demonstrated they were connected. Daniel Alcides Carrión, a student of medicine in the capital of Peru, Lima, set out to prove that these two diseases were the same. He removed a wart sample from a patient with the skin condition and inoculated himself with incisions that he made in his arms. Carrión developed the symptoms of Oroya Fever thus demonstrating that these two diseases were different stages of the same disease, which is now called Bartonellosis. Unfortunately, he died from the disease, but he is hailed as a hero in Peru.

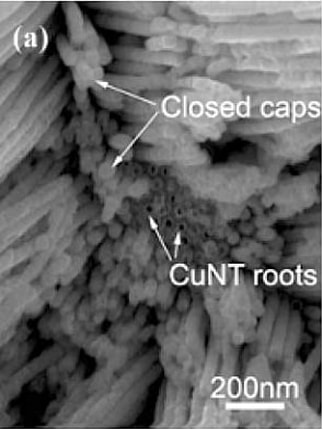

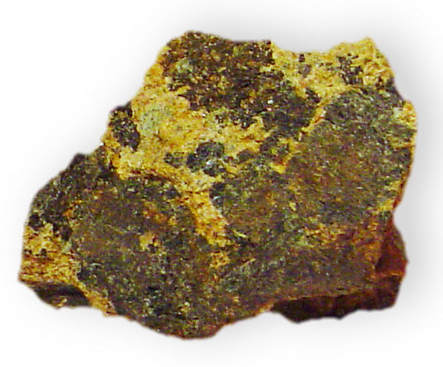

The above are but a fraction of the cases of individuals who risked life and limb performing heroic science. Many people criticize the usefulness of most cases of heroic science, especially when it just involves a sample size of “one”, and these critics have a point. In the end, heroic science should be held to the same standards of rigor as regular science. However, whether those who experimented on themselves did it out of need to overcome bureaucratic obstacles, the belief in the correctness of their ideas, scientific curiosity, or because they were crazy, you always have a certain degree of admiration for the individuals who put their lives and health on the line for a scientific idea. They are willing to cross a line of security that most researchers wouldn’t dare to cross. The photograph of Barry Marshall by Barjammar is in the public domain. The photograph of Dr. Goldberger made for the Centers for Disease Control and Prevention is in the public domain. The photograph of Max von Pettenkofer is in the public domain in the US. The photograph of Jesse Lazear from the United States National Library of Medicine is in the public domain.

A warning to my more sensitive readers: This post contains some rude terms and juvenile humor! “Get your mind out of the gutter!” is a common exhortation used on a person who interprets things or thinks about things in indecent ways. In our culture, having your mind in the gutter is clearly considered to be something negative. However, sometimes having your mind in the gutter, or having someone around with their mind in the gutter, can actually be an advantage. How can this be? A joke in the biotech industry involves the owner of a genetics company who is thinking about the name he should give to a new branch of the company that he is planning to open in Italy. 
The owner innocently decides that because his company is a genetics company, and because the new branch is opening in Italy, he will call the new branch of the company "Gen-Italia". It is in situations like these where having someone around with their mind in the gutter can be useful to spot the vagaries of language and slang. The above is just a joke, but consider the true story of a company called “Custom Service Chemicals”. Back in 1964 they paid a marketing firm to suggest a new name for them. The marketing firm came up with a combination of the words “Analytical” and “Technology” which perfectly described what the company was doing, and thus they changed their name to “Analytical Technologies”, which they fatefully proceeded to abbreviate to “AnalTech”. Apparently no one involved in the name-change operation had their mind in the gutter to spot the obvious problem with the new name. As a result of this, the company has been the butt of jokes (get it?) for all of its existence culminating in one memorable episode in 2017 when newspapers reported that a truck had plowed into AnalTech creating a hole which released an odor that led to a HazMat situation. The company has sometimes complained about the puerile humor it is subject to, but they get no sympathy from me!

Oh no you don't!

However, the AnalTech story is nothing compared to the fiasco that beset none other than the company Pfizer. Pfizer is a pharmaceutical giant with 90,000 employees selling products in 90 international markets and generating billions of dollars in profits. This is the company that brought us penicillin and Viagra. Pfizer normally functions like a well-oiled machine when it comes to advertising their drugs, but in 2012 they experienced a major breakdown. At the time Pfizer had come up with a drug for osteoarthritis in dogs called Rimadyl, and they wanted to put together a marketing campaign to promote it. 
Unfortunately there was no one in their marketing team with their mind in the gutter to enlighten them. Thus it was that to encourage people to use Rimadyl on their ailing dogs, they invited their customers to “rim-a-dog”; in other words, apply Rimadyl to their canine friends. Pfizer even created a long-gone website: rimadog.com. Alas, if someone had made even a cursory search of possible slang meanings of the verb “to rim” they would have averted the catastrophe. In response to the public outcry from dog lovers, Pfizer apologized and modified their marketing of Rimadyl, but it was too late. They had already left a permanent mark in the annals (sorry, couldn’t resist using that word) of botched marketing campaigns. Sometimes what happens is that the people involved in coining a problematic name are foreign individuals who are not well acquainted with American slang. The Hungarian Nobel Prize winner Albert Szent-Györgyi discovered vitamin C and found that paprika contained large amounts of it. This was a godsend to the depressed economy of the Hungarian town of Szeged where Szent-Györgyi resided in the early 1930s. Merchants and scientists joined forces to produce and sell a paste derived from paprika which was christened “vitapric”. Sales of the paste were so good in Europe that Szent-Györgyi and his colleagues wanted to sell vitapric in the United States. Luckily for them, someone with their mind in the gutter advised them to change the name of the product! However, a group of Chinese researchers were not that fortunate. Dachi Yang, Guowen Meng, Shuyuan Zhang, Yufeng Hao, Xiaohong An, Qing Wei, Min Ye and Lide Zhang discovered a method to make very small tubes (nanotubes) using metals like bismuth and copper. To name their creations they used the abbreviation for the word nanotubes “NT” preceded by the chemical symbols of bismuth (Bi) and copper (Cu). Thus they referred to bismuth nanotubes as “BiNTs”, and copper nanotubes as “CuNTs”. 
But what really cemented the fame of this Chinese group for posterity was that they wrote up the results and got them published in a mainstream English language scientific journal. Thus in their paper entitled “Electrochemical synthesis of metal and semimetal nanotube–nanowire heterojunctions and their electronic transport properties” you find no fewer than 12 references to “BiNT/s” and 50 references to “CuNT/s”. It seems that not a single person with their mind in the gutter was involved in the process of review and editing of the paper. Adding insult to injury, the Chinese research group received the 2007 Vulture Vulgar Acronym (VULVA) award from the British technology news and opinion website The Register. Of course, it’s not always a foreigner unacquainted with English slang who dreams up these terms. In 1972 doctors Alexander Pines, Michael Gibby, and John Waugh developed a new procedure for high resolution nuclear magnetic resonance (NMR) of rare isotopes and chemically dilute spins in solids. This was a significant breakthrough because up to that moment NMR could only be applied to liquids. But then the authors proceeded to name their new technique: Proton Enhanced Nuclear Induction Spectroscopy. The problem with this name is, of course, the acronym. These naughty scientists went on to publish scientific articles where the name of the technique was prominently displayed in the title. It seems that no reviewer or editor with their mind in the gutter caught on to the joke. For the sake of decency most scientists nowadays refer to the technique as “cross-polarization”. While the name for the NMR technique was intended, some rude acronyms may have just arisen unintentionally from names put forward by people with insufficiently dirty minds. 
Thus the short denomination for the enzyme argininosuccinate synthetase is “ASS”, the cell culture substrate poly-L-ornithine is abbreviated as “PORN”, and the muscle relaxant and murder weapon, Suxamethonium chloride, is called “Sux”. Some scientific disciplines have certain traditions or follow some methodologies that make them prone to generating silly or rude names.

Cummingtonite

In geology there is a custom of naming a new mineral by adding the suffix “-ite” (a word derived from Greek meaning rock) to whatever the mineral is named after. But this tradition can create problems when there is no one around with their mind in the gutter. For example, when a new mineral made up of magnesium, iron, and silicon was found in the locality of Cummington in Massachusetts it was christened “Cummingtonite”, when a new mineral made up of calcium, silicon, and carbonate was discovered in a mine named “Fuka” in Japan, it was branded “Fukalite”, and a mineral made up of silicon and aluminum was named “Dickite” in honor of chemist Allan Brugh Dick who discovered it. In chemistry, when a new chemical compound is isolated from a plant, often the name of the particular chemical form of the compound is combined with the name of the genus or the species of the plant. The problem is that several plants do not have the most innocent of names, and there seems to be a dearth of chemists, or of people who vet these names, with minds in the gutter. Thus “vaginatin” was isolated from the plant Selinum vaginatum, “erectone” was isolated from the plant Hypericum erectum, “clitoriacetal” was isolated from the plant Clitoria macrophylla, “nudic acid” was isolated from the plant Tricholomo nudus, and so on.

I could go on, but from all these examples you get the idea. So next time someone is chastised for having their mind in the gutter, intervene and quote a few of these examples to make the case for the usefulness of such a mind in science! I have taken some of the examples listed in this post from Paul May’s amazing webpage “Molecules with Silly Names” and combined them with my own research. Mr. May has also written an eponymous book that can be bought on Amazon. Dog image by Calyponte used here under an Attribution-Share Alike 3.0 Unported license. The image from the copper nanotube article is a screen capture used here under the legal doctrine of Fair Use. The image of Cummingtonite by Dave Dyet is in the public domain.

When people say or write things like “nothing is impossible”, they normally mean this as a motivational slogan intended to overcome life’s difficulties. They don’t literally believe that nothing is impossible, but rather that talent, focus, hard work, and dedication can overcome seemingly insurmountable obstacles to achieve success. And you know what? I’m fine with that. It may not be accurate, but I understand that people may need a little oomph in their lives. If some accuracy needs to be sacrificed to help people succeed, I am willing to look the other way, so to speak. However, some people actually believe that the maxim “nothing is impossible” is true, and not only in the realm of personal achievements, but also in the field of science. I have had a few discussions with these mystics that left me wishing that I had applied Alder’s Razor. I have tried to explain that our world functions based on a set of rules that clearly delineate what is and what isn’t possible, and that science is in the business of finding what these rules are. 
In response to this, I normally get a list of things that scientists thought to be impossible that were later demonstrated to be possible, along with comments like “scientific theories have been proven false again and again” and some interspersed subtle and not so subtle hints that my mind is not sufficiently open. To these criticisms, I answer that science sometimes moves forward by trial and error, and, as these critics point out, scientists have made mistakes or have underestimated the complexity of the phenomena they were studying. But, as more knowledge was generated and explanations were refined, sufficiently developed scientific theories were established that allowed accurate discrimination of the possible from the impossible. Additionally, I have already pointed out not only that the vast majority of scientific theories have not been proven false, but that there are dangers in keeping your mind too open.

Nevertheless, the more important point, which seems to be ignored by the “nothing is impossible” crowd, is that our very lives depend on knowing what is possible and impossible in the world around us. Think about it. When we walk on a cement surface, we know that it will not suddenly turn into quicksand and swallow us up. When we approach a tree, we know it will not suddenly uproot itself and attack us. When a cloud passes over us, we know it will not suddenly turn to lead, fall, and crush us. We have a very clear understanding of how cement surfaces, trees, clouds, and myriads of other things work, and we know with absolute certainty what they can and cannot do. We know what can and cannot happen. We know what is possible and what is impossible. If this understanding of how our world works were false, our lives would be in peril.

But our capacity to gain this understanding is nothing new, nor is it limited to the human species. In nature we observe that animals also gain an understanding of how their surroundings work through individual experience and from observing other animals. They develop an understanding of what is edible and what isn’t, of what prey is safe to attack and what prey is dangerous, of what places are safe to be in and which are not, and so on. There is a rhyme and reason to this understanding that animals gain. They grasp that certain things are possible and others are not, and they exploit this knowledge to negotiate the complexity of their environments and survive. The animal ancestors of humans acquired this information like other animals through their individual experience and from observing others. However, as ancient humans developed the capacity to think and communicate to an extent that was orders of magnitude higher than that of their animal kin, the knowledge they derived from experience became insufficient.
There were many scary things happening in the world that they did not understand and could not control, like earthquakes, storms, volcanoes, droughts, pests, and disease, that could dramatically affect their lives. And there were also other things like eclipses, comets, shooting stars, or the moon acquiring a red hue that were mysterious. Ancient humans were able to formulate questions. Why did these things happen? Was there something or someone making them happen? How can I prevent bad things from happening? How can I be spared? Ignorance and fear begat superstition and the belief in the supernatural, which allowed humans to make sense of their surroundings and gain a measure of control over their existence.

But then some humans started investigating how the world around them worked. They observed. They experimented. They found regularities and patterns. They asked and answered questions and made predictions based on the answers, which they then refined from experience. Science was born. And science was able to deliver explanations regarding the nature and inner workings of those scary things and those mysterious things, allowing us to understand them, and in many cases control them, or at least reduce their detrimental effects on our lives. Viewed from this vantage point, science is merely a more effective way of obtaining the information that we once obtained through experience. Knowledge of what is possible and not possible is key to our survival, and science has been the most successful way in which we have gained this information. To those who say “nothing is impossible” I just have one word: balderdash!

Image from Pixabay is free for commercial use (Creative Commons CC0).
